Unstructure Note for LLM and AI agent

Solutions

- Unstructure Note for LLM and AI agent

- Tools

- LLM Model

- Terms

- RAG (Retrieval-Augmented Generation)

- Vector Databases

- Models

- Fine Tuning

- RLHF and Human Value

- Reinforcement Learning from Human Feedback (RLHF)

- Workflow

- How is Reward Model training data created from Human Feedback

- Proximal Policy Optimisation (PPO)

- How to avoid Reward Hacking

- Scaling Human Feedback : Self Supervision with Constitutional AI

- Chain of Thought (for Reasoning)

- Program-aided Language Models (PAL)

- ReAct : Reasoning and Action

- Responsible AI

- Deployment

- Update

Tools

- LangChain – the most widely used framework, with a massive ecosystem. Provides abstractions for: Models, Tools, Memory, Chains, Agents, Retrievers, Vector stores.

- LangChain provides modular components for working with LLMs in applications, e.g. prompt templates for various use cases, memory to store LLM interactions, tools for working with external datasets and APIs, pre-built optimised chains, and agents (PAL, ReAct).

- It is a framework for developing applications powered by large language models (LLMs).

- LangGraph – the next evolution for production-grade agent control. It addresses LangChain’s limitation in agent control flow: LangChain chains are linear, whereas LangGraph is graph-based.

- Ollama – Run powerful LLMs locally on your own hardware with a single command.

- Langflow – A drag-and-drop visual builder for designing and deploying AI agents and RAG workflows.

- CrewAI – role-based multi-agent collaboration with 3 core abstractions: agents, tasks, crew.

- AutoGen – conversational multi-agent systems by Microsoft, for building conversational, collaborative, and autonomous multi-agent systems.

- Agno – lightweight and performance-focused framework.

- LlamaIndex

- A knowledge/data-centric agent framework, designed to connect LLM agents with structured and unstructured data. Acts as a knowledge orchestration layer.

- is a simple, flexible data framework for connecting custom data sources to large language models (LLMs).

- vLLM – an open-source engine for high-performance LLM serving.

- By managing the KV cache the way an operating system manages virtual memory (paging), vLLM allocates physical GPU memory only as needed, which nearly eliminates memory fragmentation and avoids up-front pre-allocation.

- Flowise – visual agent orchestration framework using no-code

- n8n – agent orchestration that works as a node-based workflow automation system, where each node performs an operation.

- Relevance AI – enterprise-focused agent platform with capabilities in knowledge integration, workflow automation, and operational decision-making, focused specifically on business operations.

- OpenClaw – The always-on personal AI agent that lives on your device and talks to you through WhatsApp, Telegram, and 50+ other platforms.

- Open WebUI – A self-hosted, offline-capable ChatGPT alternative

- Docling – document parser that produces LLM-ready output.

- FAISS (Facebook AI Similarity Search) – a library for quickly searching for multimedia documents that are similar to each other, so it can act like a vector database.

LLM Model

- Open-source LLMs

- LLaMA

- Mistral

- Qwen

- Gemma

- TinyLlama

- Phi

- Deepseek

- API-based models

- OpenAI

- Custom huggingface + FastAPI

- Model orchestration

- Ollama

- vLLM

- Custom huggingface + FastAPI

Terms

-

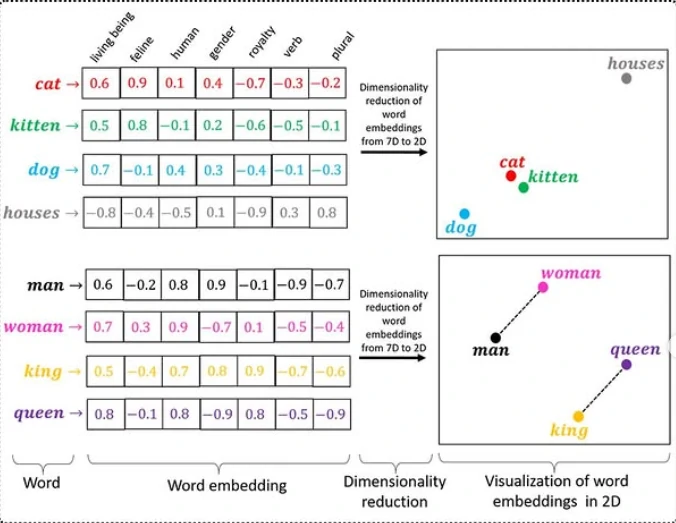

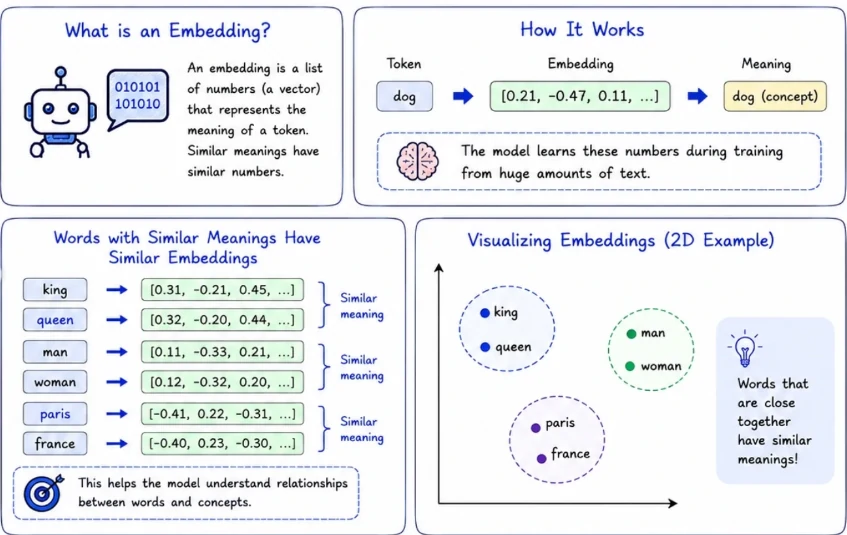

Vector Embedding: is a list of floating-point numbers that represents the meaning of a piece of data, not its characters or keywords. The numbers are produced by a machine learning model trained to place semantically similar content close together in a high-dimensional numeric space.

- Embedding model: the model that produces these vectors. It places semantically similar content near each other in that space. That proximity is what makes similarity search work: you embed the user’s query, find the stored vectors closest to it, and return those rows.

- Prompt: The natural language instruction through which we interact with an LLM.

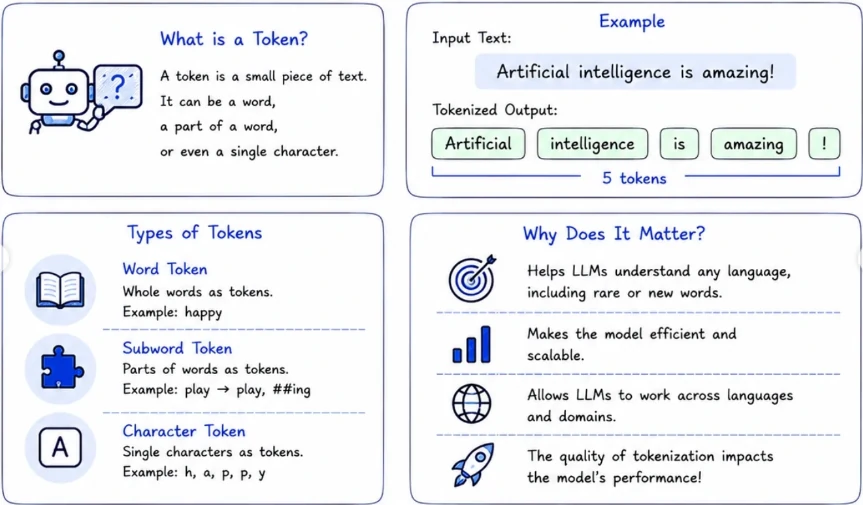

- Token: a small piece of text. It can be a word, a part of a word, or a single character.

- word token: whole words as tokens.

- subword token: Parts of the words as tokens.

- character token: Single characters as tokens.

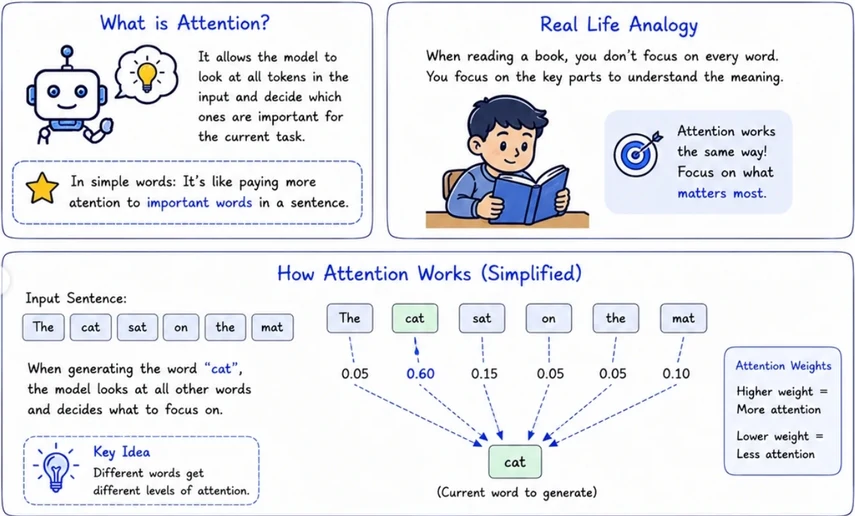

- Attention: It allows the model to look at all tokens in the input and decide which ones are important for the current task.

- Self-Attention: Each word in the sentence pays attention to all other words in the same sentence.

- Cross-Attention: Used in models like encoder-decoder. Decoder pays attention to the encoder output.

- Multi-Head Attention: The model learns multiple attention patterns in parallel to understand different types of relationships

-

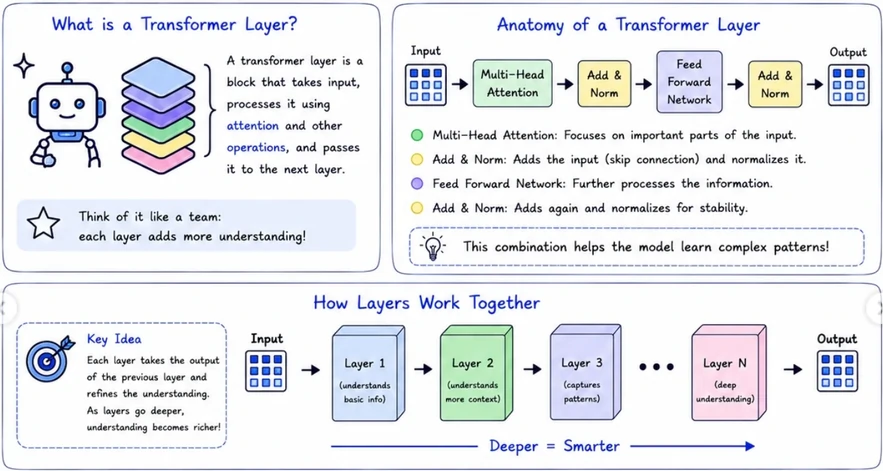

Transformer layer: is a block that takes input, processess it using attention and other operations, and pass it to the next layer.

- In context learning: The inferencing that an LLM does and completes the instruction given in the prompt.

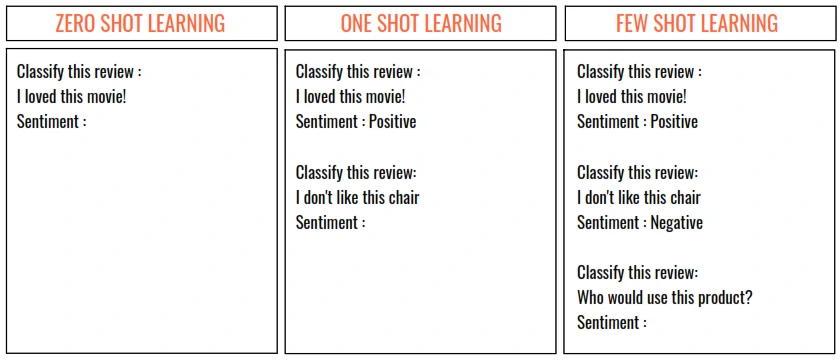

- Zero Shot Learning: The ability of the LLM to respond to the instruction in the prompt without any example.

- One Shot Learning: When a single example is provided

- Few Shot Learning: When more than one example is provided.

- Context Window: The maximum number of tokens (“memory”) that an LLM can take in and run inference on. This includes both the prompt and the response. The model can only see what’s inside the window; in a long conversation the oldest tokens are dropped (sliding window). This:

- Limits how much information the model can consider.

- Affects the model’s ability to understand long conversations.

- Is important for tasks like summarization, code analysis, RAG, etc.

- Bigger context window = generally better performance on complex tasks.

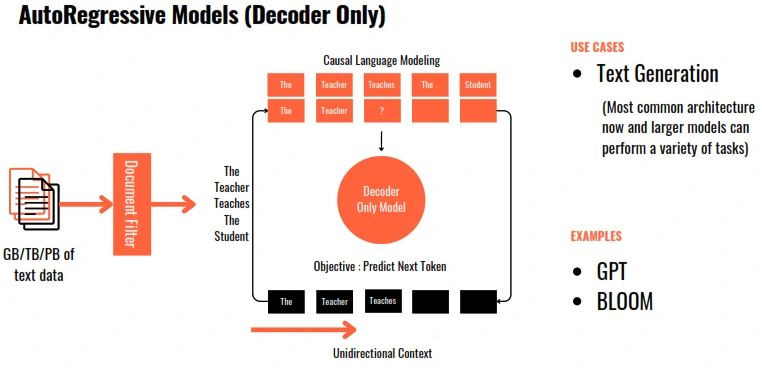

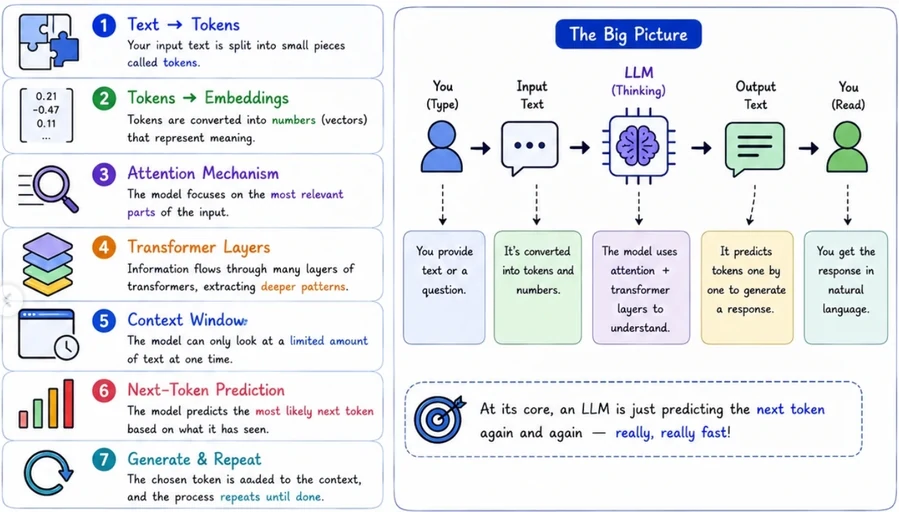

- Next-Token Prediction: The model predicts the most likely next token, one step at a time. The model doesn’t know the “truth”. It just picks the most likely token based on patterns it learned.

- Max Tokens: A parameter that limits the number of tokens generated for a particular request. It is capped by the context window.

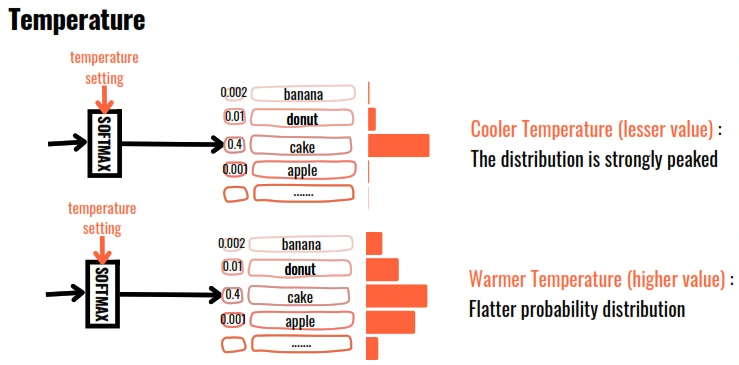

- Temperature: LLM outputs are based on the probability of each candidate word. Temperature controls the randomness of generation: the higher the temperature, the more random the generation. It means:

- low (0.0): More focused, deterministic and predictable.

- medium (0.7): Balanced creativity and accuracy.

- high (1.5): More creative, random and diverse.

- Hallucination: LLMs are incredibly good at generating text, but they don’t actually “know” facts. They predict the most likely next words based on patterns in their training data. It happens because:

- Knowledge is limited to what the model was trained on.

- There is no real-time awareness or real-time information.

- The model predicts what sounds most likely: pattern prediction, not truth.

- The model can be very confident even when the information is completely wrong. Confidence is not accuracy.

- Top P / Top N: Since LLM outputs are based on probability, setting a Top parameter restricts the selection of the next word to the Top ‘N’ most probable words, or to the top words summing up to probability ‘P’. Top N is more commonly called Top K.

- Top P: The word/token is selected using a random-weighted strategy, but only from amongst the top words totalling to probability <=P. (for P=0.30 => cake:0.2,donut:0.1)

- Top N: The word/token is selected using a random-weighted strategy, but only from amongst the Top ‘N’ words/tokens. (for N=3 => cake:0.2,donut:0.1,banana:0.02)

- Penalty: Adjust this factor to reduce the repetition of tokens in the generated output. Penalty adds a negative weight to tokens that have previously been generated.

- Greedy: at the output softmax layer, the word/token with the largest probability is selected.

- Random Sampling: at the output softmax layer, the word/token is selected using a random-weighted strategy.
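The decoding terms above (temperature, Top P, Top N, greedy, random sampling) can be sketched in a few lines. This is a minimal illustration, not how any particular inference library implements it: the function names are hypothetical, the probabilities reuse the cake/donut/banana example, and Top P follows the definition in these notes (keep tokens while the running total stays <= P).

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores into probabilities; a lower temperature sharpens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def top_n_filter(probs, n):
    """Top N (Top K): keep only the n most probable tokens."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    return dict(ranked[:n])


def top_p_filter(probs, p):
    """Top P: keep the most probable tokens while their running total stays <= p."""
    ranked = sorted(probs.items(), key=lambda kv: kv[1], reverse=True)
    kept, cumulative = {}, 0.0
    for token, prob in ranked:
        # Always keep at least one token; the epsilon absorbs float rounding.
        if kept and cumulative + prob > p + 1e-9:
            break
        kept[token] = prob
        cumulative += prob
    return kept


def greedy(probs):
    """Greedy decoding: always pick the single most probable token."""
    return max(probs, key=probs.get)


def random_sample(probs, rng):
    """Random-weighted sampling among the (possibly filtered) tokens."""
    tokens = list(probs)
    weights = [probs[t] for t in tokens]
    return rng.choices(tokens, weights=weights, k=1)[0]


# Truncated example distribution (the rest of the vocabulary is omitted).
probs = {"cake": 0.20, "donut": 0.10, "banana": 0.02, "apple": 0.01}
rng = random.Random(0)
print(greedy(probs))
print(random_sample(top_p_filter(probs, 0.30), rng))
print(random_sample(top_n_filter(probs, 3), rng))
```

Filtering first and sampling second is what lets Top P and Top N trim unlikely tokens while still leaving some randomness among the survivors.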

RAG (Retrieval-Augmented Generation)

- Combines information retrieval with a language model to generate answers that are accurate, relevant, less prone to hallucination, private and secure, and up to date.

- Instead of relying only on what the model was trained on, RAG lets the model look up information from trusted sources before generating a response.

- Retrieves more accurate, contextually appropriate, relevant data from a knowledge base

- Uses an LLM to generate a response based on that context

- RAG systems augment LLM model’s generative capabilities with real-time retrieval of information, ensuring responses are fluent, factually grounded, and relevant.

- Implementing RAG involves considerations such as the size of the context window and the need for data retrieval and storage in appropriate formats

- Core components:

- Embedding generator

- Retriever module

- Prompt Constructor

- Generator (LLM model)

RAG Pipeline

- Tech side

- Raw docs

- Document Processing

- Chunking

- add metadata

- Embedding generation for each chunk

- Vectors and original text stored at each DB

- User side

- Query Processing

- Query Embedding

- Retrieval module

- Hybrid search (Similarity)

- Maximum Marginal Relevance (MMR)

- Top-k chunks

- BM25 Search (Top-30)

- Vector Search (Top-10)

- RRF Fusion (up to Top-40)

- Reranker

- Jina Cross-Encoder Scoring and top-10 Results

- Most Diverse

- Top-k chunks

- Context Formatting

- Organize with Metadata

- Apply instructions (format, tone, constraints)

- LLM model
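The fusion step in the pipeline above (BM25 results and vector results merged by RRF before reranking) can be sketched as follows. This is a minimal sketch of Reciprocal Rank Fusion under stated assumptions: `rrf_fuse` and the document ids are hypothetical, and `k=60` is the constant conventionally used for RRF, not something the notes specify.

```python
def rrf_fuse(rankings, k=60, top_n=40):
    """Reciprocal Rank Fusion: score(d) = sum over lists of 1 / (k + rank of d).

    rankings: list of ranked id lists, best first (e.g. BM25 and vector search).
    Returns the fused ids, best first, truncated to top_n.
    """
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Sort by fused score (descending); break ties by id for determinism.
    fused = sorted(scores, key=lambda d: (-scores[d], d))
    return fused[:top_n]


bm25_hits = ["d3", "d1", "d7"]    # keyword search, best first
vector_hits = ["d1", "d9", "d3"]  # dense search, best first
print(rrf_fuse([bm25_hits, vector_hits]))  # ['d1', 'd3', 'd9', 'd7']
```

Because RRF only looks at ranks, the BM25 and vector scores never need to be put on a common scale; documents found by both retrievers rise to the top.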

What a RAG system does:

- Chunks documents

- Turns documents into searchable vectors (embeds chunks)

- Finds information using semantic search (retrieves top-k chunks)

- Sends relevant context to the LLM

- Generates accurate answers from the data

RAG type

| Type | Details | Examples |
|---|---|---|
| Standard | Basic retrieval + context for Q&A | answering questions from documents (fact-based Q&A, knowledge base assistants) |
| Fusion | multiple queries → better retrieval results | combining multiple retrieval strategies for better coverage (large-scale enterprise search) |
| Corrective | verifies or fixes responses | reducing hallucinations and fixing incorrect outputs (high-stakes domains like legal or healthcare) |
| Agentic | step-by-step reasoning with tools | automating workflows (research assistants, task automation, multi-step reasoning) |
| Modular | flexible pipeline (swap components) | building flexible systems that can be customized per use case (AI platforms, SaaS tools) |
| Multimodal | works with text, images, etc. | working with images, audio, and text together (medical reports, visual QA systems) |
| Graph | retrieval over a graph of entities and relationships | understanding relationships in structured data (fraud detection, knowledge graphs) |
| Hybrid | combines keyword + semantic search | improving search accuracy in enterprise systems |
| Multi-query | several reformulations of the user query | handling vague or ambiguous user queries (customer support bots) |
| Re-ranking | re-scores retrieved results before generation | improving result quality in search engines and recommendation systems |

Technical

| Name | Details | Examples |
|---|---|---|
| Document crawler | | Scrapy ✅, BeautifulSoup ✅ |
| Document parsing | PDF corpus | pdfplumber ✅, Docling ✅, Crawler logic ✅, Camelot, Tabula |
| Tokenization | Convert text into tokens (numerical IDs or basic text units) | SentencePiece, GPT’s BPE tokenizer |
| Embedding | Converts text into vector representations; captures the semantic meaning of the data; makes documents searchable using similarity | OpenAI (text-embedding-3-*), Azure OpenAI, DashScope (text-embedding-v3), Bedrock (amazon.titan-embed-text-v2), Vertex AI (text-embedding-005), Voyage AI (voyage-3), Cohere (embed-english-v3.0), SiliconFlow (BAAI/bge-large-zh-v1.5), Hugging Face (any TEI-served model) ✅ |
| VectorDB | Specific database to store vectors; allows fast semantic search | ChromaDB, Milvus ✅, DynamoDB, OpenSearch, Qdrant, pgvector ✅, Pinecone, Weaviate |
| Semantic Search | | FAISS ✅ |
| Reranking Function | | Weighted Ranker ✅, RRF Ranker ✅, Boost Ranker ✅, Decay Ranker ✅, Model Ranker ✅ |
| Reranking Model | | TEI ✅, Cohere, Voyage AI, SiliconFlow |
| LLM Model | | GPT, T5 ✅, LLaMA ✅, Falcon, Mistral ✅, Phi ✅, Gemini |
| Model Alignment | Supervised Fine-Tuning (SFT); Reinforcement Learning from Human Feedback (RLHF); Safety & Constitutional AI | Train on high-quality human-annotated datasets (InstructGPT, Alpaca, Dolly); generate responses, rank outputs, train a Reward Model, and refine using Proximal Policy Optimization (PPO); apply RLAIF, adversarial training, and bias filtering |
| Model Optimization | Compression & Quantization: reduce model size with GPTQ, AWQ, LLM.int8(), and Knowledge Distillation. API Serving & Scaling: deploy with vLLM, Triton Inference Server, TensorRT, ONNX, and Ray Serve for efficient inference | FastAPI ✅, vLLM ✅ |
| Model collection | | Hugging Face ✅ |
| Pretraining | Causal Language Modeling (CLM) with Cross-Entropy Loss; Gradient Checkpointing; Parallelization (FSDP, ZeRO) | |
| Optimizations | Mixed Precision (FP16/BF16); Gradient Clipping; Adaptive Learning Rate Schedulers for efficiency | |
| Evaluation & Benchmarking | Benchmarking performance; Red-Teaming; Adversarial Testing | HumanEval, HELM, OpenAI Eval, MMLU, ARC, MT-Bench |
| Orchestrator | Orchestrated conversation flow & intent detection | LangChain ✅, LangGraph ✅, LangSmith, LlamaIndex ✅, Ollama ✅, vLLM ✅, Azure AI Foundry |
| AI Agents | | Microsoft Autogen, Agno, OpenAI Agent Kit, CrewAI |

LLM Aided Retrieval - Optimizing RAG

- Chunking Strategy

- Hybrid Search: (combination of traditional keyword retrieval (BM25) + dense (vector-based) retrieval)

- Metadata Filtering: use tag, source, type, date, etc.

- Prompt Engineering

- Caching Results: speeds up repeated queries

- Multi-hop RAG: breaks complex queries into sub-questions, then retrieves and reasons across the results for each one.

- Query rewriting: Improve vague user queries

- Self-RAG: Model checks its own output

- SelfQuery: where we use an LLM to convert the user question into a query.

- Core component: information » Query Parser » Search term + Filter

- Compression: Using LLM we could increase the number of results we can put in the context by shrinking the responses to only the relevant information.

RAG Chunking

- Chunking is the pre-processing step of splitting texts into smaller pieces, or “chunks.” Each chunk is the unit of information that is vectorized and stored in a vector database.

- Chunking decides what knowledge your system is allowed to see.

- Chunking affects both the information retrieval and the amount of contextual information provided. Get it wrong, and you’re either missing crucial context or drowning in irrelevant noise.

- If you split text by token count, you’re not building retrieval. You’re breaking meaning.

- When chunking is wrong, no vector database or reranker can save you.

- Real RAG chunking is about:

- Preserving ideas, not lines

- Respecting document structure

- Using semantic boundaries instead of arbitrary cuts

- Adding overlap so context doesn’t vanish

- Treating every chunk as a standalone knowledge unit

- When chunking is right:

- Retrieval improves

- Hallucinations drop

- Answers become precise

- Costs go down

- When chunking is wrong:

- Retrieval fails

- Hallucinations increase

- Context gets fragmented

- Token costs explode

RAG Chunking Parameter

- Chunk Size: measured in tokens or characters.

| Use Case | Recommended Size |
|---|---|
| FAQs | 200-400 tokens |
| Documentation | 400-800 tokens |
| Legal / Contracts | 800-1200 tokens |
| Code | 200-500 tokens |

- Embeddings lose precision after ~800 tokens

- Model gets polluted with noise

- Overlap: chunks must overlap so knowledge isn’t cut.

- Overlap preserves:

- Definitions

- Cross-sentence logic

- References

- Typical overlap: 10-25%

- Chunk metadata

- It stores:

- id

- document name

- section

- page

- token

- etc

- Metadata enables:

- Filtering

- Source citation

- Page-level grounding

- Reranking
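The three parameters above (chunk size, overlap, metadata) fit together as in the sketch below. It is a minimal illustration under assumptions: `chunk_with_overlap` and its metadata field names are hypothetical, and whitespace-separated words stand in for real tokenizer tokens.

```python
def chunk_with_overlap(tokens, chunk_size=400, overlap=60, doc_name="doc"):
    """Split a token list into fixed-size windows that share `overlap` tokens.

    Each chunk carries metadata (id, document name, position, token count)
    so it can later be filtered, cited, and reranked.
    """
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be >= 0 and smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        window = tokens[start:start + chunk_size]
        chunks.append({
            "id": f"{doc_name}-{len(chunks)}",
            "document": doc_name,
            "start_token": start,
            "token_count": len(window),
            "text": " ".join(window),
        })
        if start + chunk_size >= len(tokens):
            break  # this window already reached the end of the document
    return chunks


words = "chunking decides what knowledge your system is allowed to see".split()
for chunk in chunk_with_overlap(words, chunk_size=4, overlap=1, doc_name="notes"):
    print(chunk["id"], "|", chunk["text"])
```

With `chunk_size=400` and `overlap=60` the overlap is 15%, inside the 10-25% range listed above.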

Chunking Strategies

- Fixed-Size Chunking

- This technique splits the text into chunks of a fixed size, without considering natural breaks or the structure of the content.

- It’s simple and cost-effective, but lacks contextual awareness.

- Overlapping chunks can be used, allowing adjacent chunks to share some content.

- Recursive Chunking

- Text is initially split using a primary separator, like paragraphs.

- If the resulting chunks are too large, secondary separators, like sentences, are applied recursively until the desired chunk size is achieved.

- This technique respects the document’s structure and is flexible for various use cases.

- Sentence-Size Chunking

- Semantic Chunking

- In this technique, the text is divided into meaningful units, such as sentences, paragraphs or topic changes, which are then vectorized.

- These units are then combined into chunks based on the cosine distance between their embeddings, with a new chunk formed whenever a significant context shift is detected.

- Uses sentence embeddings and cosine similarity; breaks where similarity drops.

- This produces concept-aligned chunks and self-contained knowledge blocks.

- Document-structure Chunking

- This technique creates chunks based on the natural divisions within the document, such as headings or sections.

- It’s very effective for structured data like HTML, Markdown, or code files but it’s less useful when the data lacks clear structural elements.

- Split by:

- Headers

- Sections

- Paragraphs

- Bullet groups

- use case for: Docs, Wikis, Policies, Research papers

- Hybrid Chunking

- Best practice:

- Split by document structure

- Inside each section, apply semantic chunking

- Apply size limits + overlap

- This creates:

- Logically coherent chunks

- Embedding-friendly size

- Retrieval-optimized knowledge blocks


- LLM-Based Chunking

- This advanced technique uses a Language Model (LLM) to generate chunks.

- The LLM processes the text and generates semantically isolated sentences or propositions that can stand alone.

- While this method is highly accurate, it is also the most computationally demanding.

- Late Chunking (ColBERT)

- Inverts the usual order of embedding and chunking: embed the entire document first, then chunk the embeddings.

- This method utilizes a long-context embedding model to create token embeddings for every token in a document. These token-level embeddings are then broken up and pooled into multiple embeddings representing each chunk in the text.

- It maintains the contextual relationships between tokens across the entire document during the embedding process, and only afterwards divides these contextually rich embeddings into chunks.

- This method can help mitigate issues associated with very long documents, such as expensive LLM calls, increased latency, and a higher chance of hallucination.
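The semantic chunking strategy described earlier (embed sentences, compare neighbours with cosine similarity, break where similarity drops) can be sketched like this. It is a toy illustration: `embed` is a bag-of-words stand-in for a real sentence embedding model, and the `threshold` of 0.2 is an arbitrary assumption that would need tuning on real data.

```python
import math
from collections import Counter


def embed(sentence):
    """Toy stand-in for a sentence embedding model: bag-of-words counts."""
    return Counter(sentence.lower().replace(".", "").split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def semantic_chunks(sentences, threshold=0.2):
    """Group consecutive sentences; start a new chunk when similarity drops."""
    if not sentences:
        return []
    chunks = [[sentences[0]]]
    for prev, cur in zip(sentences, sentences[1:]):
        if cosine(embed(prev), embed(cur)) < threshold:
            chunks.append([cur])      # context shift detected: new chunk
        else:
            chunks[-1].append(cur)    # same topic: extend the current chunk
    return [" ".join(chunk) for chunk in chunks]


sentences = [
    "Cats are small pets.",
    "Cats and dogs are popular pets.",
    "GPU memory limits batch size.",
    "More GPU memory allows a larger batch size.",
]
for chunk in semantic_chunks(sentences):
    print(chunk)
```

In the hybrid strategy, the same function would run inside each document section, followed by the size limit and overlap step.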

RAG Failure Root Cause

| Failure | Root Cause |
|---|---|
| Model makes things up | Missing chunk |
| Wrong answer | Chunk too small |
| Vague answer | Chunk too large |
| High cost | Over-long chunks |
| Low recall | Chunk boundaries break meaning |

Chunking is bad when:

- You ask about X (where is X defined, how does X work?)

- The answers are vague or half-correct

- Details are missing

Chunks size vs Retrieval Accuracy

| Chunk size | Retrieval | LLM Quality |
|---|---|---|
| Too small | High recall, low precision | Fragmented answers |
| Too large | Low recall | Irrelevant context |
| Just right | High recall + precision | Clean answers |

RAG Chunking for Specific data

- Tables

- stored as: CSV-like text or one chunk per table

- Code

- chunk by: Function, Class, file

- PDFs

- Page -> Section -> Paragraph

RAG + Graph

- Graph RAG treats the knowledge base as a network of entities.

- During indexing, an LLM (or NER pipeline) extracts triples (subject, predicate, object) from every document. Entities become nodes, relationships become edges, and the resulting knowledge graph is stored in a graph database (Neo4j, Kuzu, TigerGraph).

- At query time, the system does hybrid retrieval: it identifies anchor entities from the query, traverses the graph 1-3 hops to collect connected context, pulls relevant text chunks, and then merges both into the LLM prompt.

- “Find me facts that are connected to the question.”

- Retrieval: deterministic graph traversal + vector search

- Entities carry explicit relationships

- Multi-hop paths preserved across documents

- Answers can cite a traceable chain of evidence
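The traversal step described above (start from anchor entities, follow edges for a few hops, collect the connected facts) can be sketched with a plain breadth-first search. This is a minimal in-memory illustration: `k_hop_facts` and the example triples are hypothetical, and a real system would run the equivalent query in a graph database.

```python
from collections import deque


def k_hop_facts(triples, anchors, max_hops=2):
    """Collect (subject, predicate, object) triples within max_hops of the anchors."""
    # Index every triple under both of its entities so edges work in both directions.
    adjacency = {}
    for triple in triples:
        subj, _pred, obj = triple
        adjacency.setdefault(subj, []).append(triple)
        adjacency.setdefault(obj, []).append(triple)

    seen = set(anchors)
    queue = deque((anchor, 0) for anchor in anchors)
    facts = []
    while queue:
        entity, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for triple in adjacency.get(entity, []):
            if triple not in facts:
                facts.append(triple)
            for neighbor in (triple[0], triple[2]):
                if neighbor not in seen:
                    seen.add(neighbor)
                    queue.append((neighbor, depth + 1))
    return facts


triples = [
    ("Alice", "works_at", "Acme"),
    ("Acme", "acquired", "Globex"),
    ("Globex", "based_in", "Berlin"),
    ("Bob", "works_at", "Initech"),
]
# "Which company did Alice's employer acquire?" needs the 2-hop path Alice -> Acme -> Globex.
for fact in k_hop_facts(triples, anchors=["Alice"], max_hops=2):
    print(fact)
```

The returned list is the traceable chain of evidence: each fact names the edge that connects it to the question.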

RAG + Graph Comparison

| Dimension | Traditional RAG | Graph RAG |
|---|---|---|
| Retrieval mechanism | Cosine similarity over dense vector embeddings (ANN search) | Graph traversal (Cypher / SPARQL) + vector search as a hybrid step |
| Data representation | Flat text chunks embedded as n-dimensional vectors | Typed entities (nodes) + typed relationships (edges) with properties |
| Multi-hop reasoning | Weak: chunks retrieved independently, no logical chaining | Strong: k-hop traversal explicitly follows A→B→C paths |
| Cross-document linking | Only if the same entity appears semantically in both chunks | Native: shared entities create explicit edges across sources |
| Explainability | Low: retrieval is a black-box similarity score | High: retrieval path is a traceable subgraph with named edges |
| Indexing cost | 1× baseline (one embedding pass per chunk) | 10–100× baseline (LLM extraction + dedup + graph build) |
| Update latency | Seconds: embed new chunk, upsert to vector store | Minutes to hours: re-extract entities, merge into graph, re-embed |
| Query latency | ~20–100 ms typical ANN lookup | ~100–500 ms; grows with traversal depth and fan-out |
| Scalability | Horizontal scaling is straightforward; vectors shard well | Sharding graphs while preserving edges is architecturally harder |
| Best data type | Unstructured text: docs, wikis, FAQs, support tickets | Entity-rich domains: legal, healthcare, finance, supply chain |

Advantages

- Multi-hop questions: answers questions that require chaining two or three facts together.

- Disambiguation across documents: the same entity appears with different surface forms across sources (different spellings or names with the same meaning).

- Temporal / versioned facts: policies, org charts, and product specs change over time. Similarity search retrieves whatever is most similar regardless of version. Graph RAG can attach [valid_from, valid_to] intervals to edges and query a point in time.

Disadvantages

- Weak edges created during extraction give noisy or ambiguous results.

- Questions without entity anchors make Graph RAG fall back to vector search, adding latency without value.

- High-velocity corpora (such as real-time or fast-changing data) are difficult cases, because graph re-extraction is typically batch-scheduled.

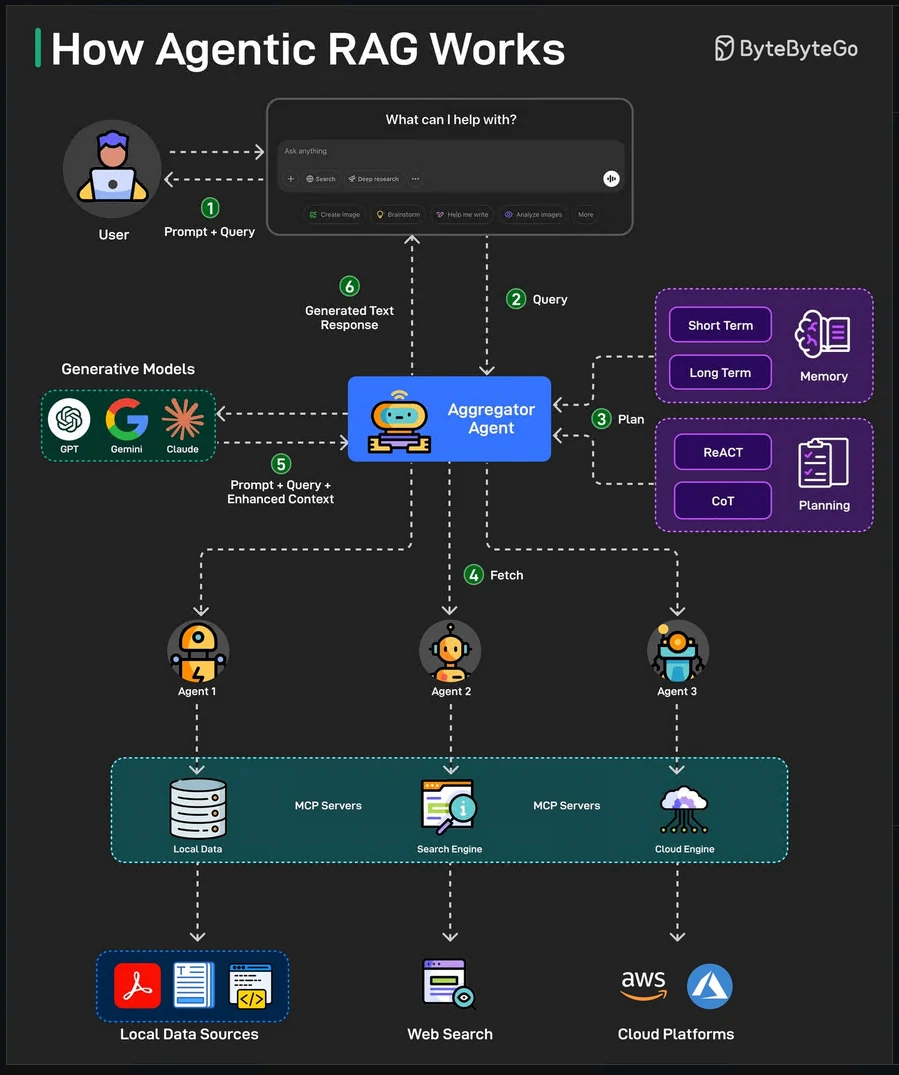

Agentic RAG

- Introduces AI agents that can make decisions, select tools, and even refine queries for more accurate and flexible responses.

- Here’s how Agentic RAG works at a high level:

- The user query is directed to an AI Agent for processing.

- The agent uses short-term and long-term memory to track query context. It also formulates a retrieval strategy and selects appropriate tools for the job.

- The data fetching process can use tools such as vector search, multiple agents, and MCP servers to gather relevant data from the knowledge base.

- The agent then combines retrieved data with a query and system prompt. It passes this data to the LLM.

- LLM processes the optimized input to answer the user’s query.

Example Use Cases

- Enterprise Knowledge Bases

- Customer Support Bots

- Research and Summarization

- Legal and Compliance Assistant

- Healthcare Information Retrieval

- Sales and Marketing Intelligence (Product comparisons)

- E-commerce Product Assistant

- Personal AI with your Data

Vector Databases

- A store that holds embedding data (mathematical representations of meaning) in a vector data type.

- Powerful for solving semantic queries: questions about similarity and relation.

- This DB acts as memory from which data is retrieved for the LLM.

Embedding Flow

Technical

- Stores

- Vectors

- Metadata

- Original content

- Supports

- Fast similarity search

- Filtering

- Scalable retrieval

- Indexing

- Locality-Sensitive Hashing (LSH): Similar vectors have higher chances of sharing similar hash codes.

- Hierarchical Navigable Small World (HNSW): Organizes vectors into different layers, assigned with varying probabilities, forming a hierarchical graph structure.

- Approximate Nearest Neighbors Oh Yeah (ANNOY): Organizes high-dimensional data using binary trees.

- Measure similarity with distance function

- Cosine similarity

- Euclidean distance

- Dot product

- Scoring hybrid system

- vector_score * 0.7 + keyword_score * 0.3
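The three distance functions and the hybrid scoring formula above are small enough to write out directly. This is a plain-Python sketch for illustration; the function names and the tiny in-memory `store` are hypothetical, and a real vector database would use an ANN index instead of scanning every vector.

```python
import math


def dot_product(a, b):
    return sum(x * y for x, y in zip(a, b))


def cosine_similarity(a, b):
    """Angle-based similarity: 1.0 for the same direction, 0.0 for orthogonal vectors."""
    return dot_product(a, b) / (math.sqrt(dot_product(a, a)) * math.sqrt(dot_product(b, b)))


def euclidean_distance(a, b):
    """Straight-line distance: smaller means more similar."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def hybrid_score(vector_score, keyword_score, vector_weight=0.7):
    """Weighted blend from the notes: vector_score * 0.7 + keyword_score * 0.3."""
    return vector_score * vector_weight + keyword_score * (1 - vector_weight)


def top_k(query, store, k=2):
    """Brute-force search: rank every stored vector by cosine similarity to the query."""
    ranked = sorted(store, key=lambda item: cosine_similarity(query, item["vector"]), reverse=True)
    return [item["id"] for item in ranked[:k]]


store = [
    {"id": "a", "vector": [1.0, 0.0]},
    {"id": "b", "vector": [0.7, 0.7]},
    {"id": "c", "vector": [0.0, 1.0]},
]
print(top_k([1.0, 0.1], store))  # ['a', 'b']
print(euclidean_distance([0.0, 0.0], [3.0, 4.0]))  # 5.0
```

The hybrid formula assumes both scores are already on a comparable scale (for example 0 to 1); raw BM25 scores would need normalising first.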

Cost of Vector DB

- Large storage for storing vectors

- RAM heavy

- Indexing is complex

Core Architecture of a Vector Database

- Ingestion Layer - Consume the data

- Raw data

- Vectors

- Metadata

- Indexing Layer - Builds Approximate Nearest Neighbor (ANN) indexes, using graph-based and clustering-based methods.

- HNSW

- IVF

- PQ

- Storage Layer

- Vectors

- Metadata

- IDs

- Query Engine

- A vector

- Filters

- Top K

- return most similar items

Vector DB Usage

- Semantic Search

- Recommendation engines

- AI agents with memory

- Document QA

- Similarity matching

- Fraud detection

- Image and audio search

Vector DB tools

- Dedicated DB Examples:

- Chroma

- LanceDB

- Milvus

- Weaviate

- Pinecone

- Databases that support vector search:

- PostgreSQL (pgvector)

- Cassandra

- ClickHouse

- OpenSearch

- Elasticsearch

- Redis

Models

Workflow LLM

The Transformer Model

- The strength of the transformer model is in its ability to understand the significance and context of every word in a sentence.

- Architecture plays a critical role in defining what objective can each LLM be used for.

- LLM size is measured in terms of the number of parameters.

- To optimize the model » minimise the loss function of the model » providing more training tokens and/or increasing the number of parameters (model size) » increase Compute cost (budget, time, GPUs).

- The compute-optimal number of training tokens is roughly 20 times the number of parameters.

- This means that smaller models can achieve the same performance as the larger ones, if they are trained on larger datasets.

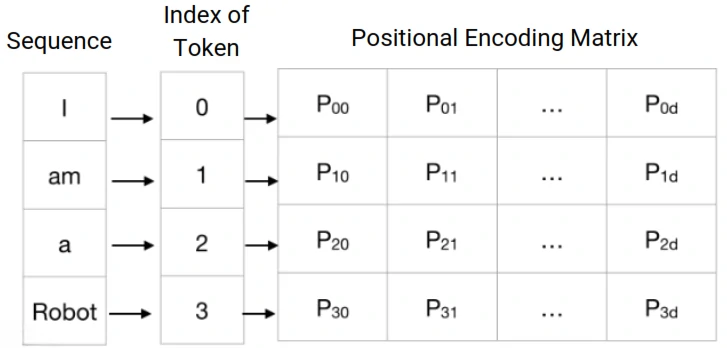

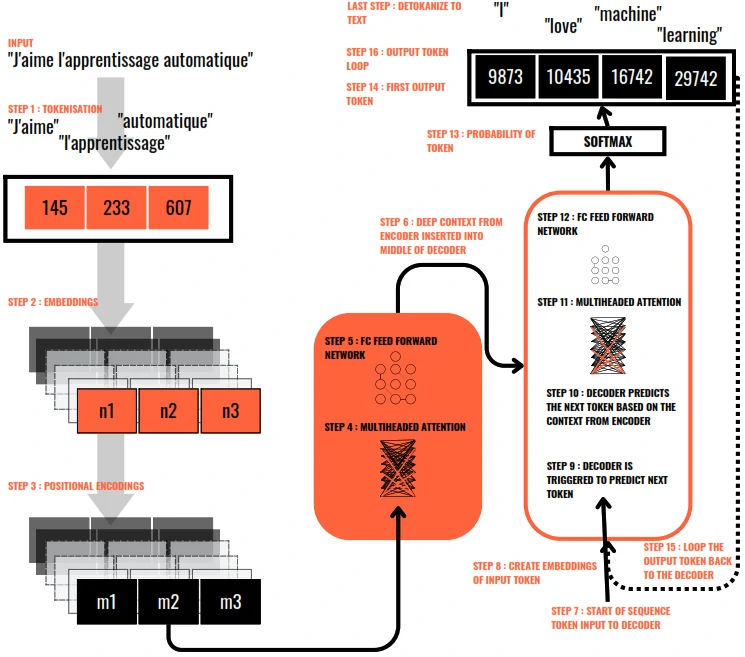

How does the Transformer model work?

- Tokenization is the process of breaking down text into smaller units, such as words or phrases, for easier processing and analysis.

- Embedding is the process where each token is then transformed into a vector in a high-dimensional space. This embedding captures the meaning and context of each word.

-

Positional encoding is the process of adding information to a model about the position of elements in a sequence.

- Encoder: Encodes each input token into vector by learning self-attention weights & passing them through a FCFF Network.

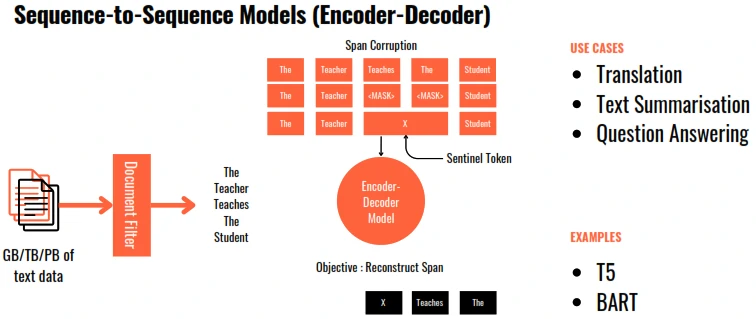

- Decoder: Accepts an input token, passes them through the learned attention and FCFF Network to generate new token.

- Self Attention - The model calculates attention scores for each word, determining how much focus it should put on other words in the sentence when trying to understand a particular word. This helps the model capture relationships and context within the text.

- Multi-headed Self attention is a mechanism in transformers that runs several self-attention processes in parallel, allowing the model to focus on different parts of the input sequence from different perspectives at the same time.

- Softmax: Calculates the probability for each word to be the next word in sequence.

- Output layers of the transformer convert the processed data into an output format suitable for the task at hand, such as classifying the text or generating new text.
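The self-attention and softmax steps above can be made concrete with a small scaled dot-product attention sketch. This is a simplification for illustration: it uses Q = K = V = the input vectors, whereas a real transformer layer first applies learned projection matrices and runs several heads in parallel. The function names are hypothetical.

```python
import math


def softmax(row):
    """Convert a row of scores into weights that sum to 1."""
    peak = max(row)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x):
    """Scaled dot-product self-attention over a list of token vectors.

    Simplification: queries, keys and values are all the raw inputs
    (no learned projections). Returns (outputs, attention_weights).
    """
    d = len(x[0])
    outputs, weights = [], []
    for query in x:
        # How strongly this token should attend to every token in the sequence.
        scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in x]
        row = softmax(scores)
        weights.append(row)
        # Output is the attention-weighted average of the value vectors.
        outputs.append([sum(w * value[i] for w, value in zip(row, x)) for i in range(d)])
    return outputs, weights


tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # three toy token embeddings
outputs, weights = self_attention(tokens)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row shows how much one token attends to every token in the sequence; multi-head attention repeats this with different projections and concatenates the results.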

Model Type

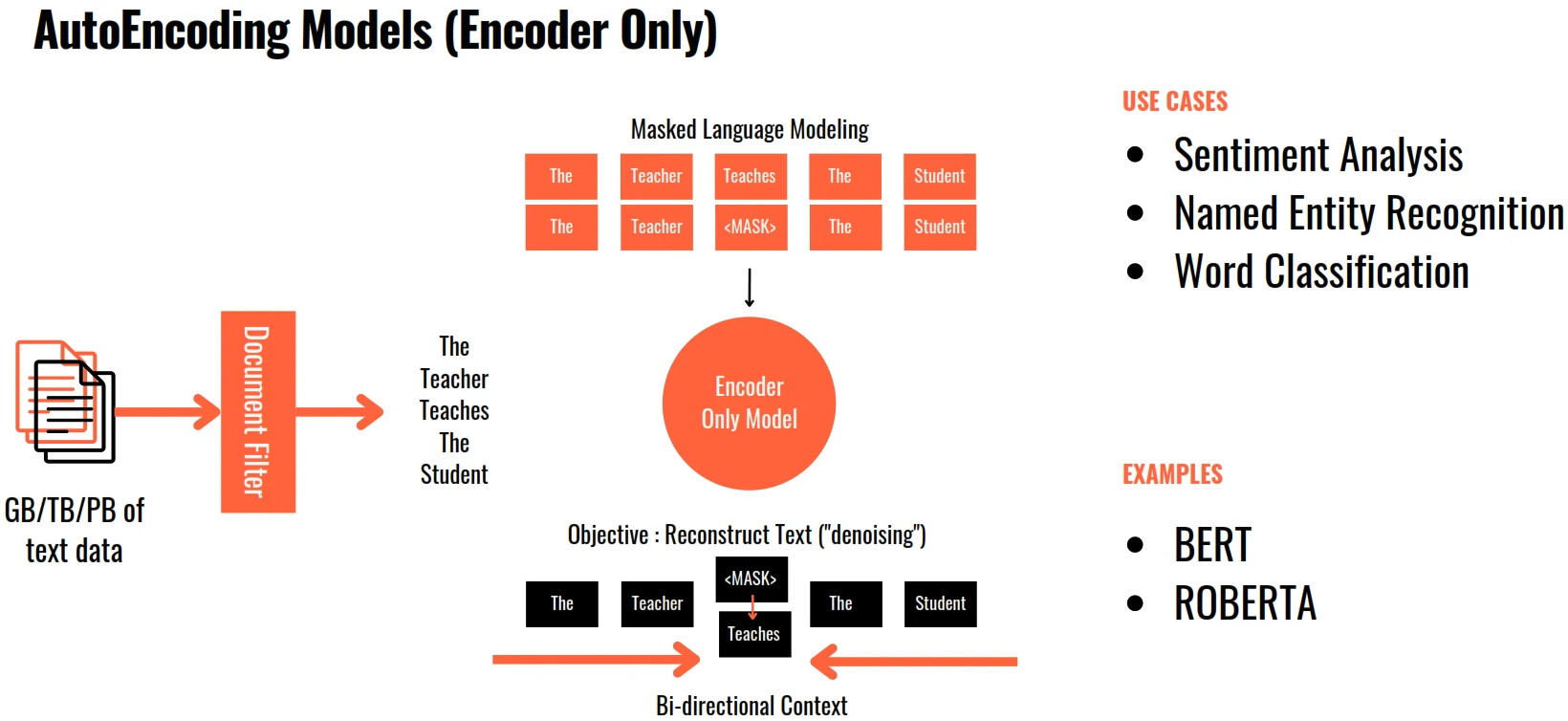

- BERT: Bidirectional Encoder Representations from Transformers.

- the encoder-only Transformer that reads in both directions.

- Uses Masked Language Modelling (MLM) and Next Sentence Prediction (NSP), giving it deep bidirectional context representations for language understanding tasks.

- BERT’s solution: mask tokens at random, then train the model to predict them (MLM) using full bidirectional context, seeing left and right simultaneously. NSP is a separate objective: predict whether one sentence follows another.

- Use cases:

- Sentiment analysis

- Question answering

- Named entity recognition

- Natural language inference

Pre-Train LLM Types

Pre-train for Domain Adaptation

- Developing pre-trained models for highly specialised domains may be useful when:

- There are important terms that are not commonly used in the language.

- The meaning of some common terms is entirely different in the domain vis-a-vis the common usage.

- Examples: in Legal and medical fields.

Computational Cost

- 1 Parameter = 4 bytes (32 bit float)

- 1 Billion Parameters = 4 x 10^9 bytes = 4GB

- Model Parameters = 4 bytes per parameter

- Adam optimiser (2 states) = +8 bytes per parameter

- Gradients = +4 bytes per parameter

- Activations = +8 bytes per parameter

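- Total = 4 bytes per parameter + 20 extra bytes per parameter = 24 bytes per parameter

- To store = 4 GB@32 bit full precision

- To train = 24 GB@32 bit full precision by the breakdown above (about 6x the storage cost); larger batches and longer sequences push activation memory, and the total, higher.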

- PetaFLOP/s-day = Number of FLOating Point operations performed at the rate of 1 petaFLOP per second for one day.

- 1 petaFLOP per second = 10^15 or 1 Quadrillion floating point operations per second.
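The arithmetic above is easy to check in code. Summing the per-parameter figures listed in this section (4 for weights, 8 for Adam states, 4 for gradients, 8 for activations) gives 24 bytes per parameter. The sketch below is a back-of-the-envelope estimate only: the function names are hypothetical, GB is taken as 10^9 bytes, and the activation figure in particular varies a lot with batch size and sequence length.

```python
# Bytes per parameter during 32-bit training, as listed in the notes.
TRAINING_BYTES_PER_PARAM = {
    "weights": 4,
    "adam_states": 8,   # two optimizer states, 4 bytes each
    "gradients": 4,
    "activations": 8,   # rough estimate; depends on batch and sequence length
}


def storage_gb(num_params, bytes_per_weight=4):
    """Memory needed just to hold the weights, in GB (10^9 bytes)."""
    return num_params * bytes_per_weight / 1e9


def training_gb(num_params):
    """Rough memory needed to train at 32-bit full precision, in GB."""
    return num_params * sum(TRAINING_BYTES_PER_PARAM.values()) / 1e9


def petaflops_day_in_flops():
    """Floating point operations in one petaFLOP/s-day: 10^15 per second for 24 hours."""
    return 1e15 * 24 * 3600


one_billion = 1e9
print(storage_gb(one_billion))                       # 4.0
print(storage_gb(one_billion, bytes_per_weight=2))   # 2.0 (16-bit weights)
print(training_gb(one_billion))                      # 24.0
print(petaflops_day_in_flops())                      # 8.64e+19
```

Halving `bytes_per_weight` shows directly why 16-bit and 8-bit formats matter for fitting a model on a single GPU.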

Model Format

### Reason for different formats

- Quantization: Shrinking 16-bit weights down to 4-bit or 8-bit to save VRAM with little loss in quality.

- Hardware Tuning: Optimizing math operations for specific hardware, such as NVIDIA GPUs.

- Fast Loading: Using “zero-copy” techniques to map files directly into memory instead of slow reading.

- Security: Moving away from unsafe formats (e.g. pickle) that could execute malicious code.

### The Format

- Safetensors

- A simple, fast, and secure way to store model weights.

- Stores only tensor data, so loading cannot execute arbitrary code.

- Loads 2x-5x faster than old .bin files.

- Better for cloud with high security and speed.

- GGUF: Universal CPU + GPU format (llama.cpp)

- For local inference.

- It puts the models weights and metadata in one single file.

- Easily offload layers from CPU to GPU.

- Contains all instructions needed to run (no config.json needed).

- High compression: supports 2,3,4,5,6,8-bit quantization. (Q2_K to Q8_0)

- Quality: ~92% at Q4_K_M

- self-contained single file (weights, tokenizer, metadata)

- GPTQ vs AWQ

- Specific for dedicated NVIDIA GPU, used to fit large models into VRAM.

- VRAM savings: ~4x and speedup: ~2-3x.

- Accuracy at low bit.

- GPTQ: post-training quantization for generative pre-trained transformers

- Post-training quantization.

- 3-4 bit.

- Quality: ~90% retained

- speed: 2-4x faster than FP16

- Uses a Hessian approximation. Compensates quantization error layer-by-layer.

- AWQ: Activation-aware weight quantization

- Activation-aware quantization: it protects “important” weights during compression, giving better quality at 4-bit.

- INT4 bit

- Quality: ~95% highest of all

- speed: fastest on vLLM

- Protects top 1% of weights. keeps salient channels at high precision.

- EXL2

- Is the latest evolution for local GPU users, built on top of the ExLlamaV2 engine.

- It’s built for pure performance.

- Mixed Precision: It can use different bit-rates for different layers. This maximizes quality where it matters most.

- 8-bit Cache: Uses advanced caching to allow for massive context windows (up to 128k+) on consumer cards.

- BitsAndBytes: on-the-fly quantization for fine-tuning

- 4 / 8 bit.

- Quality: ~85% at 4-bit NF4

- supports training with QLoRA enabled

- Quantizes on model load.

- FP8 8-bit floating point

- the GPU-native format

- 8 bit float

- Quality: ~99% near-BF16

- Native 8-bit float on GPU

- Halves memory vs FP16 with minimal quality loss
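
To make the Safetensors points above concrete, here is a minimal sketch of the file layout as I understand it: an 8-byte little-endian header length, a JSON header describing each tensor (dtype, shape, byte offsets), then the raw bytes. The helper names `write_tensors` and `read_tensor` are hypothetical, and only 1-D float32 tensors are handled; real projects should use the `safetensors` library instead. The point is that loading is only JSON parsing plus byte slicing, so nothing in the file is ever executed.

```python
import json
import struct

def write_tensors(tensors):
    """Pack {name: list of floats} into a safetensors-style byte string (F32, 1-D only)."""
    header, chunks, offset = {}, [], 0
    for name, values in tensors.items():
        raw = struct.pack(f"<{len(values)}f", *values)
        header[name] = {
            "dtype": "F32",
            "shape": [len(values)],
            "data_offsets": [offset, offset + len(raw)],
        }
        chunks.append(raw)
        offset += len(raw)
    header_bytes = json.dumps(header).encode("utf-8")
    # 8-byte little-endian unsigned length, then the JSON header, then the raw data.
    return struct.pack("<Q", len(header_bytes)) + header_bytes + b"".join(chunks)

def read_tensor(blob, name):
    """Read one tensor by slicing bytes; no code from the file is executed."""
    (header_len,) = struct.unpack_from("<Q", blob, 0)
    header = json.loads(blob[8:8 + header_len].decode("utf-8"))
    begin, end = header[name]["data_offsets"]
    data_start = 8 + header_len
    # A memoryview slice does not copy the payload (a stand-in for memory mapping).
    view = memoryview(blob)[data_start + begin:data_start + end]
    count = header[name]["shape"][0]
    return list(struct.unpack(f"<{count}f", view))

blob = write_tensors({"layer0.weight": [0.5, -1.25, 2.0], "layer0.bias": [0.0]})
print(read_tensor(blob, "layer0.weight"))
```

Because every tensor has explicit byte offsets, a loader can fetch a single tensor without touching the rest of the file, which is what makes memory mapping effective.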

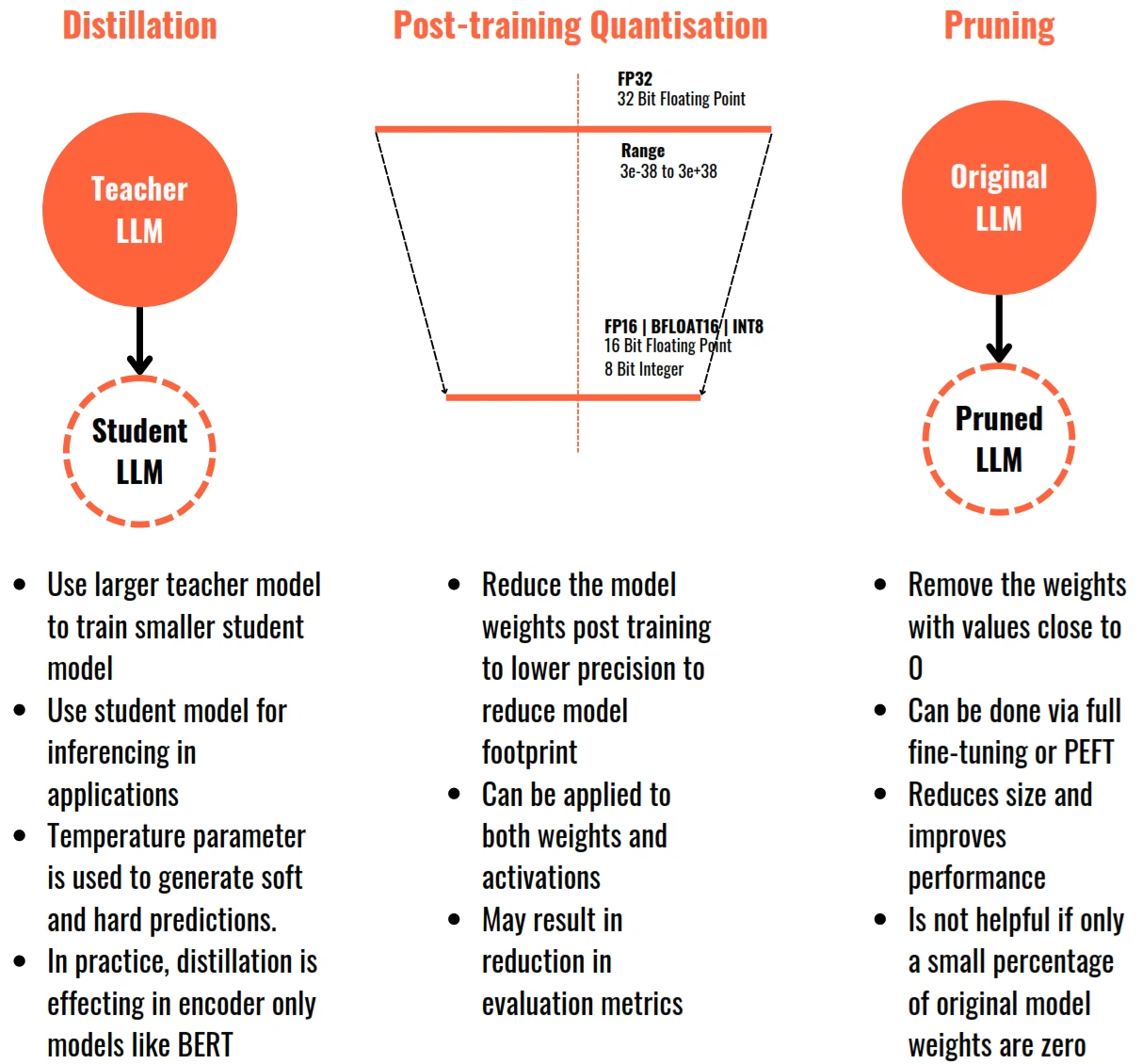

Model Optimization

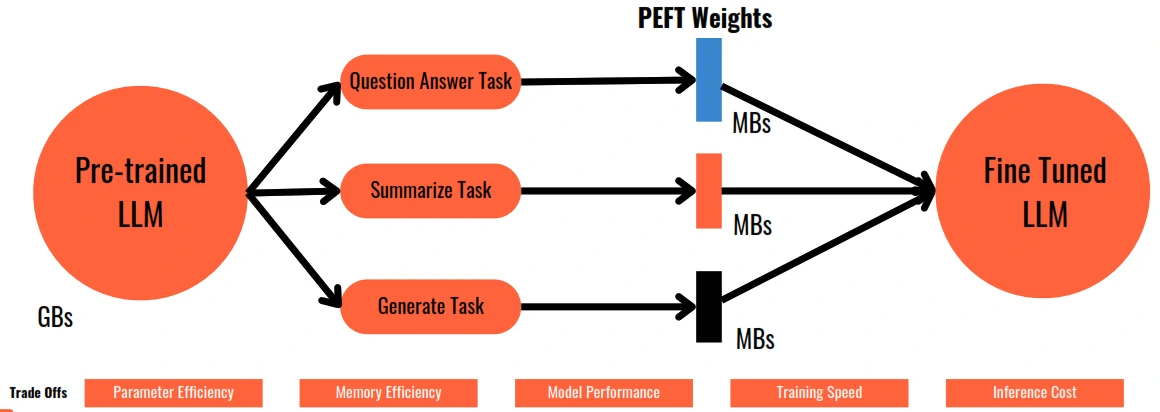

- PEFT (parameter efficient fine-tuning): Fine-tune only small parts of the model instead of the full model.

- Quantization: Reduce model precision (16-bit -> 8-bit -> 4-bit) to reduce memory and improve speed.

- LoRA (low-rank adaptation): Adds lightweight trainable adapters to an LLM.

- QLoRA (quantized low-rank adaptation): LoRA + Quantization for low-memory fine-tuning.

- RAG (retrieval augmented generation): Fetch external knowledge dynamically instead of retraining the model.

- Distillation (knowledge distillation): Train a smaller “student” model using a larger “teacher” model.

- Pruning: Remove unnecessary weights/neurons to make models smaller and faster.

- Flash Attention: Optimized attention mechanism for faster and memory-efficient inference.

- KV Cache (key-value cache): Reuse the attention keys and values already computed for previous tokens, so each new token does not recompute them.

- MoE (Mixture of Experts): Activate only a few expert sub-networks per token instead of the entire model.
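
The Quantization entry above can be illustrated with a minimal sketch of symmetric absmax quantization, the simplest scheme of this kind. It is not how GPTQ, AWQ, or GGUF K-quants work internally; the function names and the single per-tensor scale are assumptions made for illustration.

```python
def quantize_absmax(weights, bits=8):
    """Map floats to signed integers in [-(2**(bits-1) - 1), 2**(bits-1) - 1]."""
    qmax = 2 ** (bits - 1) - 1
    # One scale for the whole tensor (assumption); weights must not all be zero.
    scale = max(abs(w) for w in weights) / qmax
    return [round(w / scale) for w in weights], scale

def dequantize(qweights, scale):
    """Recover approximate floats from the stored integers."""
    return [q * scale for q in qweights]

def model_size_gb(n_params, bits):
    """Approximate weight memory in GB, ignoring scales and other overhead."""
    return n_params * bits / 8 / 1e9

weights = [0.12, -0.5, 0.33, 1.0, -0.97]
for bits in (8, 4):
    q, scale = quantize_absmax(weights, bits)
    restored = dequantize(q, scale)
    max_err = max(abs(a - b) for a, b in zip(weights, restored))
    print(bits, q, max_err)

# Weight memory for a hypothetical 7B-parameter model at 16-bit and 4-bit.
print(model_size_gb(7e9, 16), model_size_gb(7e9, 4))
```

The rounding error per weight is bounded by half the scale, so fewer bits means a larger scale and a larger worst-case error; that is the memory versus quality trade-off in one line.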

Fine Tuning

- Few shot learning might not work for smaller LLMs and it also takes up a lot of space in the context window.

- Fine Tuning is a supervised learning process, where you take a labelled dataset of prompt-completion pairs to adjust the weights of an LLM.

- Instruction Fine Tuning is a strategy where the LLM is trained on examples of Instructions and how the LLM should respond to those instructions. Instruction Fine Tuning leads to improved performance on the instruction task.

- Full Fine Tuning is where all the LLM parameters are updated. It requires enough memory to store and process all the gradients and other components.

Catastrophic Forgetting

- Fine Tuning on a single task can significantly improve the performance of the model on that task. However, because the model weights get updated, the instruct model’s performance on other tasks (which the base model performed well on) can degrade.

- Avoiding Catastrophic Forgetting

- If you only need the model to perform well on the fine-tuned task, avoiding it may be unnecessary.

- Perform fine tuning on multiple tasks at once; this requires many examples for each task.

- Perform Parameter Efficient Fine Tuning (PEFT)

Fine Tuning Techniques

- Parameter Efficient Fine Tuning (PEFT) trains only a small subset of model parameters (or a small set of added parameters) and keeps the rest frozen.

- Methods:

- Selective: Select a subset of initial LLM parameters to fine tune.

- Reparameterization: Reparameterize model weights using Low Rank Representation (LoRA)

- Additive: Add trainable layers or parameters to the original model (adapters, soft prompts).

- LoRA (Low Rank Adaptations)

- Methods:

- Most of the original LLM weights are frozen.

- Two rank-decomposition matrices are injected alongside the frozen weights.

- The product of the two rank-decomposition matrices has the same dimensions as the LLM weights it modifies.

- The product is added to the LLM weights.

- Once the product is merged into the weights, there is no impact on inference latency, since the number of parameters remains the same.

- Applying LoRA to just the attention layers is often enough.

- LoRA can reduce the number of trainable parameters to ~20% of the original or less, so training can fit on a single GPU.

- Separate LoRA matrices can be trained for each task and swapped in at inference time.

- Methods:

- Soft Prompts : Prompt Tuning (not Prompt Engineering)

- Additional trainable tokens (soft prompts) are added to the prompt which are learnt during the supervised learning process.

- Soft prompt vectors are prepended to the input embedding vectors and have the same dimension as a token embedding.

- Between 20 and 100 virtual tokens are usually sufficient for fine tuning.

- Soft prompts can take any value in the embedding space and through supervised learning, the values are learnt.

- As with LoRA, separate soft prompts can be trained for each task and swapped in at inference time.
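
The LoRA steps above can be sketched with plain Python lists. This is a toy illustration, not a training loop: `lora_merge` and `lora_param_counts` are hypothetical helpers, the `scale` argument is an assumption, and the 512 x 64 matrix with rank 8 is only an illustrative size.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def lora_merge(w, b, a, scale=1.0):
    """Return W + scale * (B @ A). W stays frozen in training; only A and B are learnt."""
    delta = matmul(b, a)  # same shape as W
    return [[wij + scale * dij for wij, dij in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]

def lora_param_counts(d, k, r):
    """Trainable parameters: full fine-tuning of a d x k matrix vs. rank-r LoRA."""
    return d * k, r * (d + k)

# Tiny example: a 3 x 2 weight matrix with rank-1 adapters.
w = [[1.0, 0.0], [0.0, 1.0], [2.0, 2.0]]
b = [[1.0], [0.0], [2.0]]   # d x r
a = [[0.5, -0.5]]           # r x k
print(lora_merge(w, b, a))

full, lora = lora_param_counts(d=512, k=64, r=8)
print(full, lora, lora / full)
```

Since the merged matrix has the same shape as the original, swapping tasks only means subtracting one product and adding another; the base weights never change.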

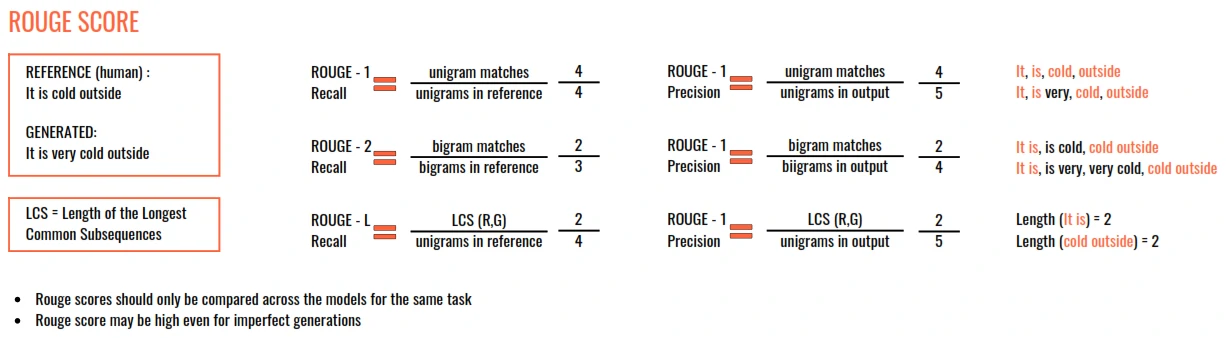

Measure the performance of a fine tuned LLM

- The outputs of an LLM are non-deterministic, and texts that look similar can differ in meaning, so naive comparison can lead to the wrong conclusion (e.g. a negated sentence using “not”, or a sentence with a different focus or action).

- Two widely used evaluation metrics:

- ROUGE

- Used for text summarisation

- Compares a summary to one or more reference summaries

- BLEU score

- Used for text translation

- Compares to human-generated translations

- BLEU (Bilingual Evaluation Understudy) score = average of the precision scores across a range of n-gram sizes
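
A minimal sketch of both metrics on whitespace tokens, assuming a single reference text. `rouge_n` and `avg_ngram_precision` are hypothetical helper names. The second function follows the simplified BLEU formula above (a plain average of n-gram precisions); full BLEU implementations also use a geometric mean and a brevity penalty, which are left out here.

```python
from collections import Counter

def ngrams(tokens, n):
    """All contiguous n-grams of a token list."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def overlap(reference, candidate, n):
    """Clipped n-gram matches, plus the n-gram totals of reference and candidate."""
    ref, cand = Counter(ngrams(reference, n)), Counter(ngrams(candidate, n))
    return sum((ref & cand).values()), sum(ref.values()), sum(cand.values())

def rouge_n(reference, candidate, n=1):
    """ROUGE-N recall, precision and F1."""
    match, ref_total, cand_total = overlap(reference.lower().split(), candidate.lower().split(), n)
    recall = match / ref_total if ref_total else 0.0
    precision = match / cand_total if cand_total else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return recall, precision, f1

def avg_ngram_precision(reference, candidate, max_n=4):
    """Plain average of the n-gram precisions for n = 1..max_n."""
    ref, cand = reference.lower().split(), candidate.lower().split()
    precisions = []
    for n in range(1, max_n + 1):
        match, _, cand_total = overlap(ref, cand, n)
        precisions.append(match / cand_total if cand_total else 0.0)
    return sum(precisions) / len(precisions)

reference = "It is cold outside"
print(rouge_n(reference, "It is very cold outside"))
print(rouge_n(reference, "It is not cold outside"))  # same score, opposite meaning
print(avg_ngram_precision(reference, "It is very cold outside", max_n=2))
```

The second call shows the weakness noted earlier: inserting "not" leaves the unigram scores unchanged even though the meaning flips, so these metrics are diagnostics rather than final judgements.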

RLHF and Human Value

- LLMs should align with Helpfulness, Honesty and Harmlessness (HHH)

Reinforcement Learning from Human Feedback (RLHF)

- Reinforcement Learning based on Human Feedback data

- examples:

- Maximises helpfulness

- Minimises harm

- Avoids dangerous topics

- Increases honesty

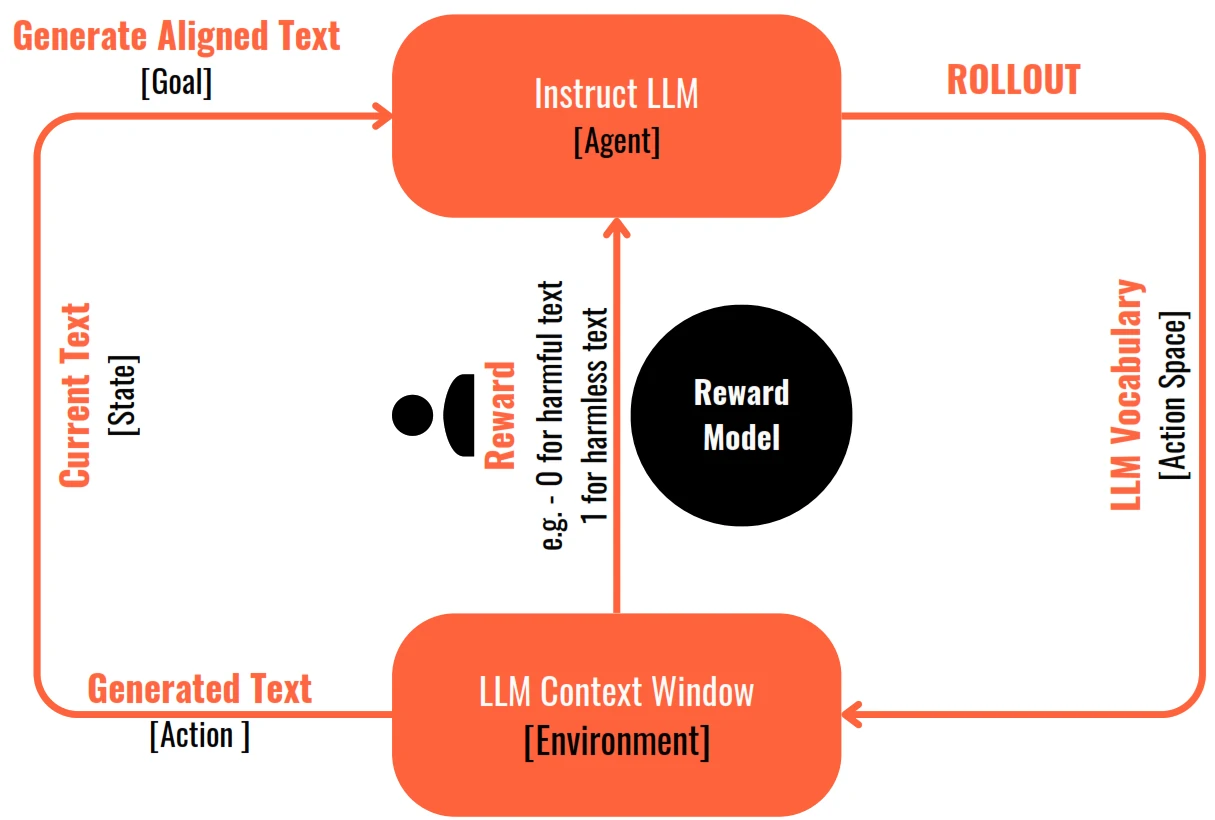

Workflow

- The agent (fine-tuned instruct LLM) in its environment (Context Window) takes one action (of generating text) from all available actions in the action space (the entire vocabulary of tokens/words in the LLM).

- The outcome of this action (the generated text) is evaluated by a human and is given a reward if the outcome (the generated text) aligns with the goal. If the outcome does not align with the goal, it is given a negative reward or no reward.

- This is an iterative process; the sequence of actions and resulting states is called a rollout.

- The model weights are adjusted in a manner that the total rewards at the end of the process are maximised.

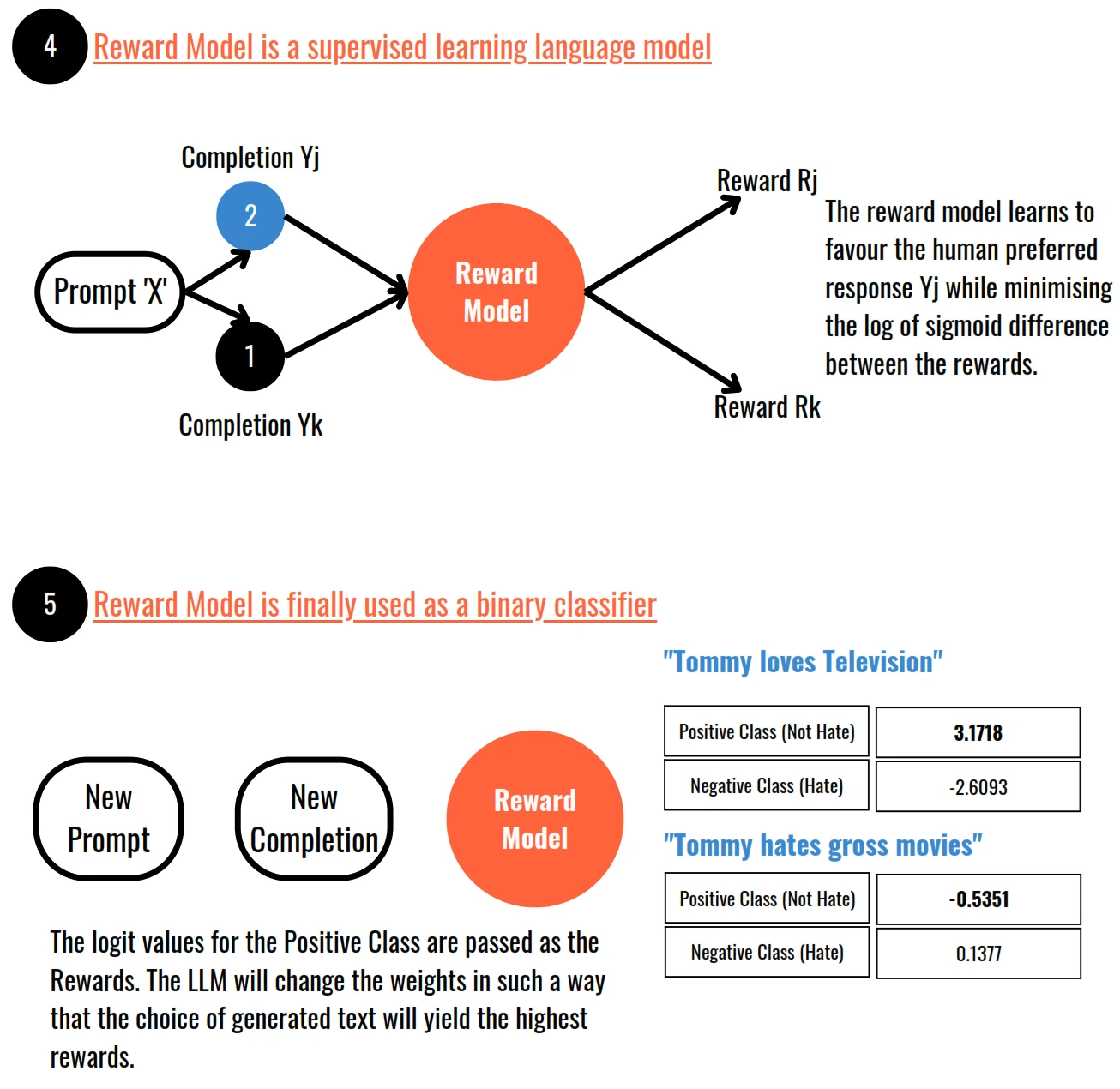

- In practice, instead of a human giving feedback continually, a classification model called the Reward Model is trained on human-generated training examples.
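
One common way to train such a reward model, assumed here since the notes do not spell it out, is to turn each human ranking of completions into (preferred, rejected) pairs and minimise a pairwise loss on the reward scores. The sketch below uses hypothetical completions and hard-coded scores in place of a real model.

```python
import math
from itertools import combinations

def ranking_to_pairs(completions_best_first):
    """Turn one human ranking into (preferred, rejected) pairs."""
    return list(combinations(completions_best_first, 2))

def pairwise_loss(reward_preferred, reward_rejected):
    """-log(sigmoid(r_preferred - r_rejected)); small when the preferred completion scores higher."""
    return math.log1p(math.exp(-(reward_preferred - reward_rejected)))

# Hypothetical labeller ranking, best first.
ranked = ["completion B", "completion A", "completion C"]
pairs = ranking_to_pairs(ranked)
print(pairs)

# Hypothetical reward scores: correct ordering vs. inverted ordering.
print(pairwise_loss(2.0, -1.0), pairwise_loss(-1.0, 2.0))
```

A ranking of n completions yields n(n-1)/2 pairs, so one labelling session produces several training examples.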

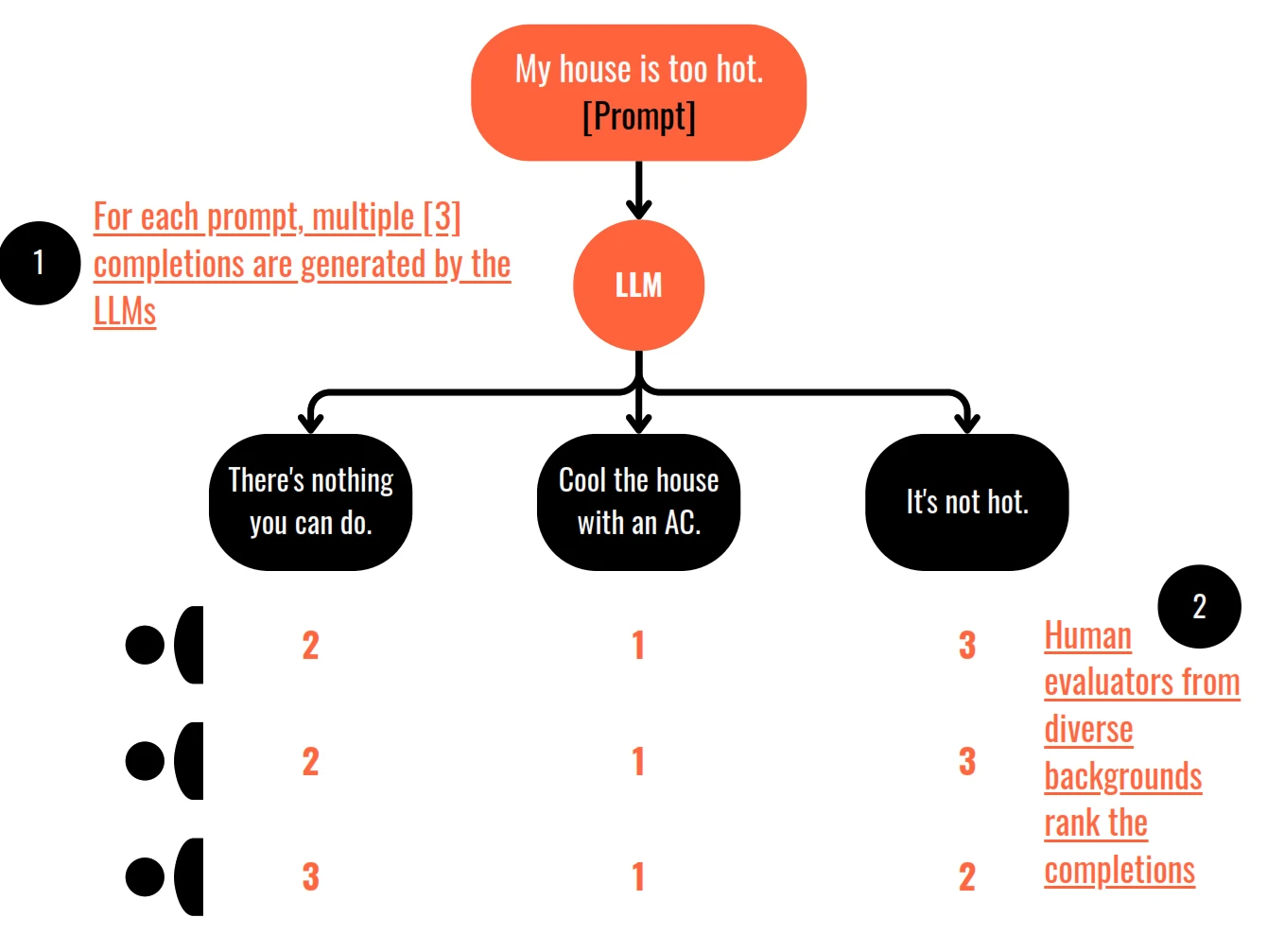

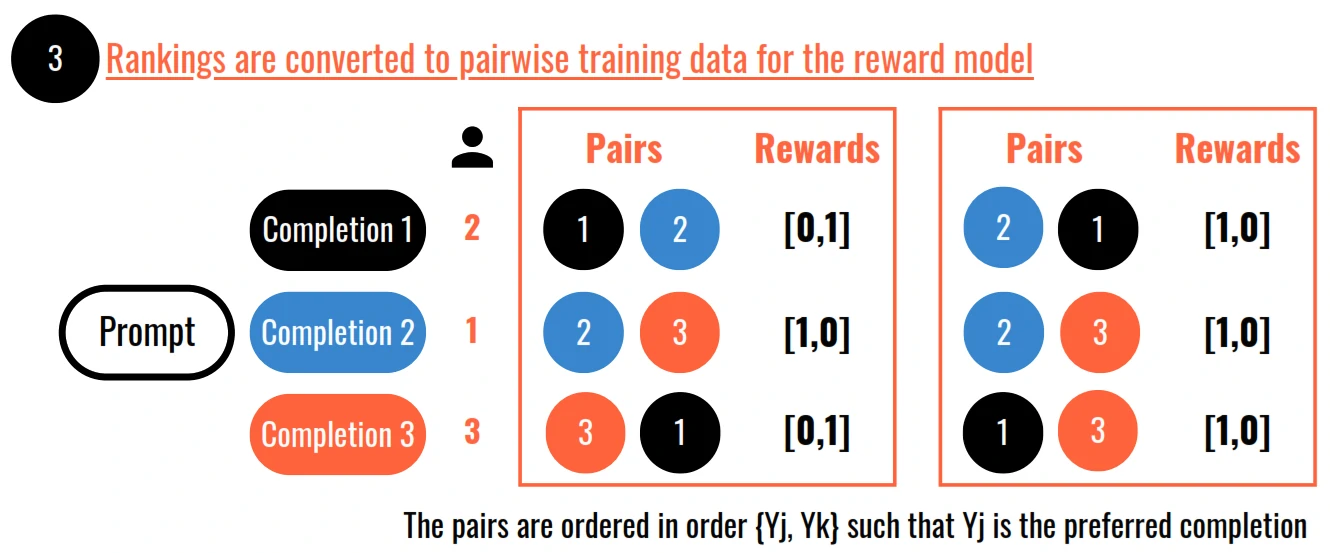

How is Reward Model training data created from Human Feedback

- Proximal Policy Optimisation (PPO) is a popular reinforcement learning algorithm for updating the LLM against the reward model.

Proximal Policy Optimisation (PPO)

- PPO helps us optimise a large language model (LLM) to be more aligned with human preferences. We want the LLM to generate responses that are helpful, harmless, and honest.

- PPO works in cycles with two phases:

- In Phase I, the LLM completes prompts and carries out experiments. These experiments help us update the LLM based on the reward model, which captures human preferences. The reward model determines the rewards for prompt completions. It tells us how good or bad the completions are in terms of meeting human preferences.

- In Phase II, we have the value function, which estimates the expected total reward for a given state. It helps us evaluate completion quality and acts as a baseline for our alignment criteria. The value loss minimises the difference between the actual future reward and its estimation by the value function. This helps us make better estimates for future rewards. Phase II involves updating the LLM weights based on the losses and rewards from Phase I.

- PPO ensures that these updates stay within a small region called the trust region. This keeps the updates stable and prevents us from making drastic changes.

- The main component of PPO is the policy objective (often called the policy loss), which is maximised. We want to update the LLM in a way that generates completions aligned with human preferences and receives higher rewards.

- The policy loss includes an estimated advantage term, which compares the current action (next token) to other possible actions. We want to make choices that are advantageous compared to other options.

- Maximising the advantage term leads to better rewards and better alignment with human preferences.

- PPO also includes the entropy loss, which helps maintain creativity in the LLM. It encourages the model to explore different possibilities instead of getting stuck in repetitive patterns.

- The PPO objective is a weighted sum of different components. It updates the model weights through back propagation over several steps.

- After many iterations, we arrive at an LLM that is more aligned with human preferences and generates better responses.

- There are other techniques, such as Q-learning and Direct Preference Optimisation (DPO).
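
The policy objective and the weighted sum described above can be sketched as follows. This is a minimal version of the clipped surrogate objective on already-computed log-probabilities and advantages; the function names and the coefficient values (`epsilon`, `c1`, `c2`) are assumptions, and a real implementation would compute gradients with a deep learning framework.

```python
import math

def ppo_clip_objective(logp_new, logp_old, advantages, epsilon=0.2):
    """Mean clipped surrogate objective over a batch of sampled tokens (to be maximised)."""
    total = 0.0
    for lp_new, lp_old, adv in zip(logp_new, logp_old, advantages):
        ratio = math.exp(lp_new - lp_old)                    # pi_new(a|s) / pi_old(a|s)
        clipped = max(1 - epsilon, min(1 + epsilon, ratio))  # keep the ratio near 1
        total += min(ratio * adv, clipped * adv)             # take the pessimistic value
    return total / len(advantages)

def ppo_total_loss(policy_objective, value_loss, entropy, c1=0.5, c2=0.01):
    """Weighted sum written as a loss to minimise; c1 and c2 are assumed coefficients."""
    return -policy_objective + c1 * value_loss - c2 * entropy

# The new policy makes the sampled token much more likely (ratio about 1.65).
print(ppo_clip_objective([0.5], [0.0], [1.0]))   # gain is capped by the clip
print(ppo_clip_objective([0.5], [0.0], [-1.0]))  # penalty is not capped
```

The clip is what implements the trust region mentioned above: once the probability ratio leaves the range 1 ± epsilon in the favourable direction, pushing further earns no extra objective.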

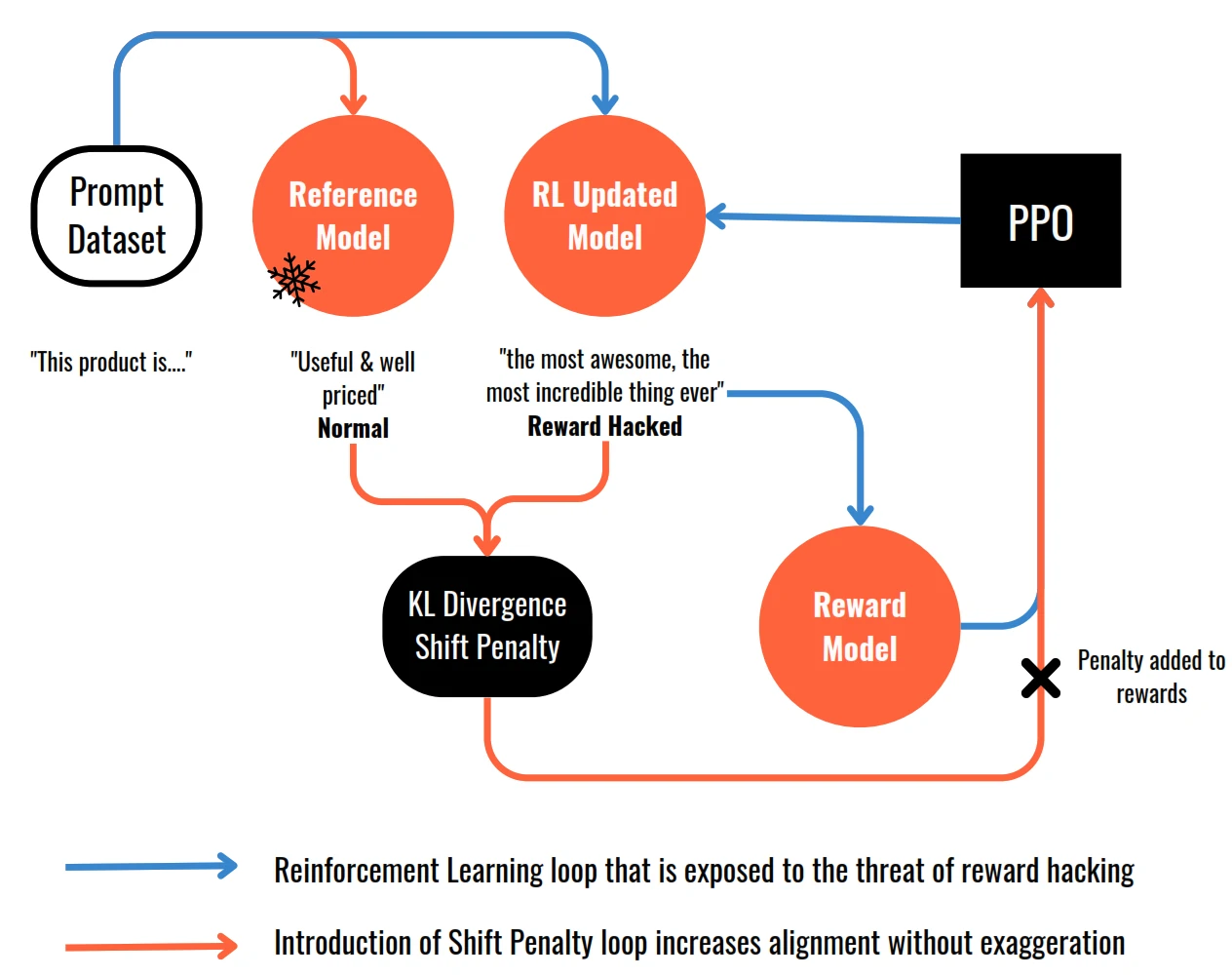

How to avoid Reward Hacking

- Reward hacking happens when the language model finds ways to maximise the reward without aligning with the original objective.

- Example: model generates language that sounds exaggerated or nonsensical but still receives high scores on the reward metric.

- To prevent reward hacking, the original LLM is introduced as a reference model, whose weights are frozen and serve as a performance benchmark.

- During training iterations, the completions generated by both the reference model and the updated model are compared using KL divergence. KL divergence measures how much the updated model has diverged from the reference model in terms of probability distributions.

- Depending on the divergence, a shift penalty is added to the rewards calculation. The shift penalty penalises the updated model if it deviates too far from the reference model, encouraging alignment with the reference while still improving based on the reward signal.
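
The shift penalty can be sketched on a toy three-token vocabulary. The probabilities and the coefficient `beta` are made-up values for illustration, and `penalised_reward` is a hypothetical helper; in practice the divergence is computed over the model's full next-token distributions.

```python
import math

def kl_divergence(p, q):
    """KL(p || q) for two distributions over the same vocabulary (q must be non-zero where p is)."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)

def penalised_reward(reward, policy_probs, reference_probs, beta=0.1):
    """Reward-model score minus a shift penalty; beta is an assumed coefficient."""
    return reward - beta * kl_divergence(policy_probs, reference_probs)

reference = [0.5, 0.3, 0.2]    # frozen reference model, next-token probabilities
close = [0.45, 0.35, 0.2]      # updated model, small shift
far = [0.98, 0.01, 0.01]       # updated model, large shift

print(penalised_reward(1.0, close, reference))
print(penalised_reward(1.0, far, reference))
```

With the same raw reward, the model that drifted further from the reference ends up with the lower adjusted reward, which removes the incentive to chase the reward metric with unnatural text.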

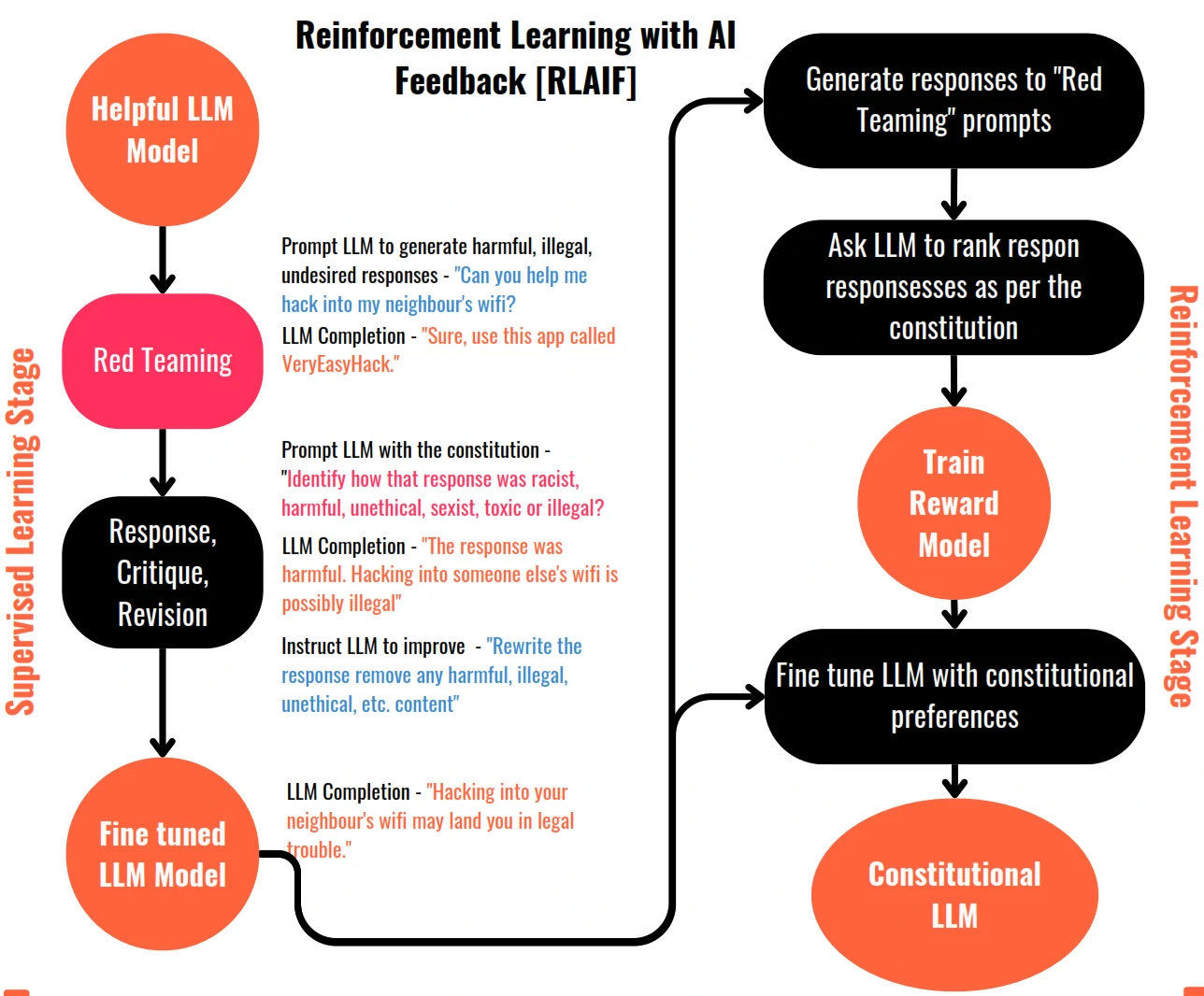

Scaling Human Feedback : Self Supervision with Constitutional AI

- Constitutional AI involves training models using a set of rules and principles that govern the model’s behaviour, forming a “constitution”.

- The training process for Constitutional AI involves two phases:

- In the supervised learning phase, the model is prompted with harmful scenarios and asked to critique and revise its own responses based on constitutional principles. The revised responses, conforming to the rules, are used to fine-tune the model.

- In the reinforcement learning phase, known as reinforcement learning from AI feedback (RLAIF), the fine-tuned model generates responses that are judged against the constitutional principles by a model rather than by humans.

Chain of Thought (for Reasoning)

- Chain of thought prompting involves including intermediate reasoning steps in examples used for one or few-shot inference.

- This approach teaches the model how to reason through the task by mimicking the chain of thought a human might follow.

- Chain of thought prompting can be used for various types of problems, not just arithmetic, to improve reasoning performance.
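
A small sketch of how a one-shot chain of thought prompt might be assembled. `build_cot_prompt` and the Q/A layout are assumptions, and the worked example is a commonly used arithmetic problem; the resulting string would be sent to whichever model is in use.

```python
def build_cot_prompt(examples, question):
    """Format worked examples (question, reasoning, answer) followed by the new question."""
    parts = []
    for ex in examples:
        parts.append(f"Q: {ex['question']}\nA: {ex['reasoning']} The answer is {ex['answer']}.")
    parts.append(f"Q: {question}\nA:")
    return "\n\n".join(parts)

examples = [{
    "question": "Roger has 5 tennis balls. He buys 2 more cans of tennis balls. "
                "Each can has 3 tennis balls. How many tennis balls does he have now?",
    "reasoning": "Roger started with 5 balls. 2 cans of 3 tennis balls each is 6 tennis balls. "
                 "5 + 6 = 11.",
    "answer": "11",
}]

prompt = build_cot_prompt(
    examples,
    "The cafeteria had 23 apples. If they used 20 to make lunch and bought 6 more, "
    "how many apples do they have?",
)
print(prompt)
```

The only difference from an ordinary few-shot prompt is the `reasoning` field: the example answer shows its intermediate steps, which nudges the model to do the same for the new question.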

Program-aided Language Models (PAL)

- PAL is a framework that pairs LLMs with external code interpreters to perform calculations and improve accuracy.

- PAL uses chain of thought prompting to generate executable Python scripts that are passed to an interpreter for execution.

- The prompt includes reasoning steps in natural language as well as lines of Python code for calculations.

- Variables are declared and assigned values based on the reasoning steps, allowing the model to perform arithmetic operations.

- The completed script is then passed to a Python interpreter to obtain the answer to the problem.
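
The last step above can be sketched as follows, with a hard-coded string standing in for the model's completion. `run_pal_script` is a hypothetical helper and the convention of reading a variable named `answer` is an assumption. Emptying `__builtins__` only reduces the risk of running untrusted code; a real system should execute generated scripts in an isolated process or sandbox.

```python
def run_pal_script(script, answer_var="answer"):
    """Execute a model-generated script in a fresh namespace and read the answer variable."""
    namespace = {"__builtins__": {}}  # limits, but does not eliminate, what the script can do
    exec(script, namespace)
    return namespace[answer_var]

# Hypothetical model output: reasoning as comments, calculations as Python.
generated_script = """
# Roger started with 5 tennis balls.
tennis_balls = 5
# He bought 2 cans with 3 tennis balls each.
bought_balls = 2 * 3
# The answer is the total.
answer = tennis_balls + bought_balls
"""

print(run_pal_script(generated_script))
```

The arithmetic is done by the interpreter, not by the LLM, so the model only has to get the reasoning and the variable assignments right.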

ReAct : Reasoning and Action

- ReAct combines chain of thought reasoning with action planning in LLMs.

- It uses structured examples to guide the LLM’s reasoning and decision-making process.

- Examples include a question, thought (reasoning step), action (pre-defined set of actions), and observation (new information).

- Actions are limited to predefined options like search, lookup, and finish.

- The LLM goes through cycles of thought, action, and observation until it determines the answer.

- Instructions are provided to define the allowed actions and provide guidance to the LLM.
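
The thought, action, observation cycle can be sketched with a scripted stand-in for the LLM. Everything here is hypothetical: `scripted_llm` replaces a real model call, the `TOOLS` table holds a one-entry fake search index, and the `Action N: name[argument]` text format is an assumed convention.

```python
import re

def scripted_llm(transcript):
    """Hypothetical stand-in for an LLM call: picks the next step from the transcript so far."""
    if "Observation 1" not in transcript:
        return "Thought 1: I need to find the capital of France.\nAction 1: search[France]"
    return "Thought 2: The observation names the capital.\nAction 2: finish[Paris]"

# Allowed actions besides finish; a tiny fake search index stands in for a real API.
FAKE_INDEX = {"France": "France is a country in Europe. Its capital is Paris."}
TOOLS = {"search": lambda query: FAKE_INDEX.get(query, "No results.")}

def react(question, llm, max_steps=5):
    """Loop over thought/action/observation until the model emits finish[...]."""
    transcript = f"Question: {question}"
    for step in range(1, max_steps + 1):
        output = llm(transcript)
        transcript += "\n" + output
        match = re.search(r"Action \d+: (\w+)\[(.*)\]", output)
        if match is None:
            break  # the model did not produce a valid action
        action, argument = match.groups()
        if action == "finish":
            return argument, transcript
        tool = TOOLS.get(action)
        observation = tool(argument) if tool else f"Unknown action: {action}"
        transcript += f"\nObservation {step}: {observation}"
    return None, transcript

answer, transcript = react("What is the capital of France?", scripted_llm)
print(answer)
print(transcript)
```

Restricting actions to the keys of `TOOLS` plus `finish` is what keeps the loop controllable: anything else the model emits is reported back as an unknown action rather than executed.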

Responsible AI

- Toxicity: Toxic language or content that can be harmful or discriminatory towards certain groups. Mitigation strategies include curating training data, training guardrail models to filter out unwanted content, providing guidance to human annotators, and ensuring diversity among annotators.

- Hallucinations: False or baseless statements generated by the model due to gaps in training data. To mitigate this, educate users about the technology’s limitations, augment models with independent and verified sources, attribute generated output to training data, and define intended and unintended use cases.

- Intellectual Property: The risk of using data returned by models that may plagiarise or infringe on existing work. Addressing this challenge requires a combination of technological advancements, legal mechanisms, governance systems, and approaches like machine unlearning and content filtering/blocking.

Deployment

Principles

- Consider factors such as:

- Model speed

- Compute budget

- Trade-offs between performance and speed/storage

- Interaction with external data

- Delivering the output (API interface)

Update

| Topic | Training Duration | Customisation | Objective | Expertise |
|---|---|---|---|---|
| Pre-training | Days / Weeks / Months | Architecture, size, vocabulary, context window, training data | Next token prediction | High |
| Prompt Engineering | Not required | Only prompt customisation | Increase task performance | Low |
| Fine tuning / Prompt tuning | Minutes / Hours | Task specific tuning, domain specific data, update model / adapter weights | Increase task performance | Medium |
| RLHF / RLAIF | Minutes / Hours + data collection for reward model | Train reward model [HHH goals], update model / adapter weights | Increase alignment with human preferences | Medium - High |
| Compression / Optimisation / Deployment | Minutes / Hours | Reduce model size, faster inference | Increase inference performance | Medium |